Static Public Member Functions | |

| static LineSegmentDetector | createLineSegmentDetector (int refine, double scale, double sigma_scale, double quant, double ang_th, double log_eps, double density_th, int n_bins) |

| Creates a smart pointer to a LineSegmentDetector object and initializes it. More... | |

| static LineSegmentDetector | createLineSegmentDetector (int refine, double scale, double sigma_scale, double quant, double ang_th, double log_eps, double density_th) |

| Creates a smart pointer to a LineSegmentDetector object and initializes it. More... | |

| static LineSegmentDetector | createLineSegmentDetector (int refine, double scale, double sigma_scale, double quant, double ang_th, double log_eps) |

| Creates a smart pointer to a LineSegmentDetector object and initializes it. More... | |

| static LineSegmentDetector | createLineSegmentDetector (int refine, double scale, double sigma_scale, double quant, double ang_th) |

| Creates a smart pointer to a LineSegmentDetector object and initializes it. More... | |

| static LineSegmentDetector | createLineSegmentDetector (int refine, double scale, double sigma_scale, double quant) |

| Creates a smart pointer to a LineSegmentDetector object and initializes it. More... | |

| static LineSegmentDetector | createLineSegmentDetector (int refine, double scale, double sigma_scale) |

| Creates a smart pointer to a LineSegmentDetector object and initializes it. More... | |

| static LineSegmentDetector | createLineSegmentDetector (int refine, double scale) |

| Creates a smart pointer to a LineSegmentDetector object and initializes it. More... | |

| static LineSegmentDetector | createLineSegmentDetector (int refine) |

| Creates a smart pointer to a LineSegmentDetector object and initializes it. More... | |

| static LineSegmentDetector | createLineSegmentDetector () |

| Creates a smart pointer to a LineSegmentDetector object and initializes it. More... | |

| static Mat | getGaussianKernel (int ksize, double sigma, int ktype) |

| Returns Gaussian filter coefficients. More... | |

| static Mat | getGaussianKernel (int ksize, double sigma) |

| Returns Gaussian filter coefficients. More... | |
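
As a rough illustration of what "Gaussian filter coefficients" means, the sketch below computes a normalized 1D kernel in plain Java, with each coefficient proportional to `exp(-(i - (ksize-1)/2)^2 / (2*sigma^2))` and the whole kernel scaled to sum to 1. The class `GaussianKernelSketch` and its helper are hypothetical names, not part of the bindings, and the sketch assumes an odd `ksize` and a strictly positive `sigma`. It does not reproduce how the library handles other inputs.

```java
import java.util.Arrays;

// Hypothetical illustration class; not part of the OpenCV bindings.
final class GaussianKernelSketch {

    // Returns ksize coefficients proportional to a sampled Gaussian,
    // normalized so that they sum to 1.
    // Assumption: ksize is positive and odd, sigma is strictly positive.
    static double[] gaussianKernel(int ksize, double sigma) {
        if (ksize <= 0 || ksize % 2 == 0) {
            throw new IllegalArgumentException("ksize must be positive and odd");
        }
        if (sigma <= 0) {
            throw new IllegalArgumentException("sigma must be positive in this sketch");
        }
        double[] kernel = new double[ksize];
        double center = (ksize - 1) / 2.0;
        double sum = 0.0;
        for (int i = 0; i < ksize; i++) {
            double d = i - center;
            kernel[i] = Math.exp(-(d * d) / (2.0 * sigma * sigma));
            sum += kernel[i];
        }
        // Normalize so the filter preserves overall brightness.
        for (int i = 0; i < ksize; i++) {
            kernel[i] /= sum;
        }
        return kernel;
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(gaussianKernel(5, 1.0)));
    }
}
```

The resulting kernel is symmetric around its center coefficient, which is also the largest one.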

| static void | getDerivKernels (Mat kx, Mat ky, int dx, int dy, int ksize, bool normalize, int ktype) |

| Returns filter coefficients for computing spatial image derivatives. More... | |

| static void | getDerivKernels (Mat kx, Mat ky, int dx, int dy, int ksize, bool normalize) |

| Returns filter coefficients for computing spatial image derivatives. More... | |

| static void | getDerivKernels (Mat kx, Mat ky, int dx, int dy, int ksize) |

| Returns filter coefficients for computing spatial image derivatives. More... | |

| static Mat | getGaborKernel (Size ksize, double sigma, double theta, double lambd, double gamma, double psi, int ktype) |

| Returns Gabor filter coefficients. More... | |

| static Mat | getGaborKernel (Size ksize, double sigma, double theta, double lambd, double gamma, double psi) |

| Returns Gabor filter coefficients. More... | |

| static Mat | getGaborKernel (Size ksize, double sigma, double theta, double lambd, double gamma) |

| Returns Gabor filter coefficients. More... | |

| static Mat | getStructuringElement (int shape, Size ksize, Point anchor) |

| Returns a structuring element of the specified size and shape for morphological operations. More... | |

| static Mat | getStructuringElement (int shape, Size ksize) |

| Returns a structuring element of the specified size and shape for morphological operations. More... | |

| static void | medianBlur (Mat src, Mat dst, int ksize) |

| Blurs an image using the median filter. More... | |

| static void | GaussianBlur (Mat src, Mat dst, Size ksize, double sigmaX, double sigmaY, int borderType) |

| Blurs an image using a Gaussian filter. More... | |

| static void | GaussianBlur (Mat src, Mat dst, Size ksize, double sigmaX, double sigmaY) |

| Blurs an image using a Gaussian filter. More... | |

| static void | GaussianBlur (Mat src, Mat dst, Size ksize, double sigmaX) |

| Blurs an image using a Gaussian filter. More... | |

| static void | bilateralFilter (Mat src, Mat dst, int d, double sigmaColor, double sigmaSpace, int borderType) |

| Applies the bilateral filter to an image. More... | |

| static void | bilateralFilter (Mat src, Mat dst, int d, double sigmaColor, double sigmaSpace) |

| Applies the bilateral filter to an image. More... | |

| static void | boxFilter (Mat src, Mat dst, int ddepth, Size ksize, Point anchor, bool normalize, int borderType) |

| Blurs an image using the box filter. More... | |

| static void | boxFilter (Mat src, Mat dst, int ddepth, Size ksize, Point anchor, bool normalize) |

| Blurs an image using the box filter. More... | |

| static void | boxFilter (Mat src, Mat dst, int ddepth, Size ksize, Point anchor) |

| Blurs an image using the box filter. More... | |

| static void | boxFilter (Mat src, Mat dst, int ddepth, Size ksize) |

| Blurs an image using the box filter. More... | |

| static void | sqrBoxFilter (Mat src, Mat dst, int ddepth, Size ksize, Point anchor, bool normalize, int borderType) |

| Calculates the normalized sum of squares of the pixel values overlapping the filter. More... | |

| static void | sqrBoxFilter (Mat src, Mat dst, int ddepth, Size ksize, Point anchor, bool normalize) |

| Calculates the normalized sum of squares of the pixel values overlapping the filter. More... | |

| static void | sqrBoxFilter (Mat src, Mat dst, int ddepth, Size ksize, Point anchor) |

| Calculates the normalized sum of squares of the pixel values overlapping the filter. More... | |

| static void | sqrBoxFilter (Mat src, Mat dst, int ddepth, Size ksize) |

| Calculates the normalized sum of squares of the pixel values overlapping the filter. More... | |

| static void | blur (Mat src, Mat dst, Size ksize, Point anchor, int borderType) |

| Blurs an image using the normalized box filter. More... | |

| static void | blur (Mat src, Mat dst, Size ksize, Point anchor) |

| Blurs an image using the normalized box filter. More... | |

| static void | blur (Mat src, Mat dst, Size ksize) |

| Blurs an image using the normalized box filter. More... | |

| static void | stackBlur (Mat src, Mat dst, Size ksize) |

| Blurs an image using the stackBlur algorithm. More... | |

| static void | filter2D (Mat src, Mat dst, int ddepth, Mat kernel, Point anchor, double delta, int borderType) |

| Convolves an image with the kernel. More... | |

| static void | filter2D (Mat src, Mat dst, int ddepth, Mat kernel, Point anchor, double delta) |

| Convolves an image with the kernel. More... | |

| static void | filter2D (Mat src, Mat dst, int ddepth, Mat kernel, Point anchor) |

| Convolves an image with the kernel. More... | |

| static void | filter2D (Mat src, Mat dst, int ddepth, Mat kernel) |

| Convolves an image with the kernel. More... | |

| static void | sepFilter2D (Mat src, Mat dst, int ddepth, Mat kernelX, Mat kernelY, Point anchor, double delta, int borderType) |

| Applies a separable linear filter to an image. More... | |

| static void | sepFilter2D (Mat src, Mat dst, int ddepth, Mat kernelX, Mat kernelY, Point anchor, double delta) |

| Applies a separable linear filter to an image. More... | |

| static void | sepFilter2D (Mat src, Mat dst, int ddepth, Mat kernelX, Mat kernelY, Point anchor) |

| Applies a separable linear filter to an image. More... | |

| static void | sepFilter2D (Mat src, Mat dst, int ddepth, Mat kernelX, Mat kernelY) |

| Applies a separable linear filter to an image. More... | |

| static void | Sobel (Mat src, Mat dst, int ddepth, int dx, int dy, int ksize, double scale, double delta, int borderType) |

| Calculates the first, second, third, or mixed image derivatives using an extended Sobel operator. More... | |

| static void | Sobel (Mat src, Mat dst, int ddepth, int dx, int dy, int ksize, double scale, double delta) |

| Calculates the first, second, third, or mixed image derivatives using an extended Sobel operator. More... | |

| static void | Sobel (Mat src, Mat dst, int ddepth, int dx, int dy, int ksize, double scale) |

| Calculates the first, second, third, or mixed image derivatives using an extended Sobel operator. More... | |

| static void | Sobel (Mat src, Mat dst, int ddepth, int dx, int dy, int ksize) |

| Calculates the first, second, third, or mixed image derivatives using an extended Sobel operator. More... | |

| static void | Sobel (Mat src, Mat dst, int ddepth, int dx, int dy) |

| Calculates the first, second, third, or mixed image derivatives using an extended Sobel operator. More... | |

| static void | spatialGradient (Mat src, Mat dx, Mat dy, int ksize, int borderType) |

| Calculates the first order image derivative in both x and y using a Sobel operator. More... | |

| static void | spatialGradient (Mat src, Mat dx, Mat dy, int ksize) |

| Calculates the first order image derivative in both x and y using a Sobel operator. More... | |

| static void | spatialGradient (Mat src, Mat dx, Mat dy) |

| Calculates the first order image derivative in both x and y using a Sobel operator. More... | |

| static void | Scharr (Mat src, Mat dst, int ddepth, int dx, int dy, double scale, double delta, int borderType) |

| Calculates the first x- or y-image derivative using the Scharr operator. More... | |

| static void | Scharr (Mat src, Mat dst, int ddepth, int dx, int dy, double scale, double delta) |

| Calculates the first x- or y-image derivative using the Scharr operator. More... | |

| static void | Scharr (Mat src, Mat dst, int ddepth, int dx, int dy, double scale) |

| Calculates the first x- or y-image derivative using the Scharr operator. More... | |

| static void | Scharr (Mat src, Mat dst, int ddepth, int dx, int dy) |

| Calculates the first x- or y-image derivative using the Scharr operator. More... | |

| static void | Laplacian (Mat src, Mat dst, int ddepth, int ksize, double scale, double delta, int borderType) |

| Calculates the Laplacian of an image. More... | |

| static void | Laplacian (Mat src, Mat dst, int ddepth, int ksize, double scale, double delta) |

| Calculates the Laplacian of an image. More... | |

| static void | Laplacian (Mat src, Mat dst, int ddepth, int ksize, double scale) |

| Calculates the Laplacian of an image. More... | |

| static void | Laplacian (Mat src, Mat dst, int ddepth, int ksize) |

| Calculates the Laplacian of an image. More... | |

| static void | Laplacian (Mat src, Mat dst, int ddepth) |

| Calculates the Laplacian of an image. More... | |

| static void | Canny (Mat image, Mat edges, double threshold1, double threshold2, int apertureSize, bool L2gradient) |

| Finds edges in an image using the Canny algorithm [Canny86]. More... | |

| static void | Canny (Mat image, Mat edges, double threshold1, double threshold2, int apertureSize) |

| Finds edges in an image using the Canny algorithm [Canny86]. More... | |

| static void | Canny (Mat image, Mat edges, double threshold1, double threshold2) |

| Finds edges in an image using the Canny algorithm [Canny86]. More... | |

| static void | Canny (Mat dx, Mat dy, Mat edges, double threshold1, double threshold2, bool L2gradient) |

| static void | Canny (Mat dx, Mat dy, Mat edges, double threshold1, double threshold2) |

| static void | cornerMinEigenVal (Mat src, Mat dst, int blockSize, int ksize, int borderType) |

| Calculates the minimal eigenvalue of gradient matrices for corner detection. More... | |

| static void | cornerMinEigenVal (Mat src, Mat dst, int blockSize, int ksize) |

| Calculates the minimal eigenvalue of gradient matrices for corner detection. More... | |

| static void | cornerMinEigenVal (Mat src, Mat dst, int blockSize) |

| Calculates the minimal eigenvalue of gradient matrices for corner detection. More... | |

| static void | cornerHarris (Mat src, Mat dst, int blockSize, int ksize, double k, int borderType) |

| Harris corner detector. More... | |

| static void | cornerHarris (Mat src, Mat dst, int blockSize, int ksize, double k) |

| Harris corner detector. More... | |

| static void | cornerEigenValsAndVecs (Mat src, Mat dst, int blockSize, int ksize, int borderType) |

| Calculates eigenvalues and eigenvectors of image blocks for corner detection. More... | |

| static void | cornerEigenValsAndVecs (Mat src, Mat dst, int blockSize, int ksize) |

| Calculates eigenvalues and eigenvectors of image blocks for corner detection. More... | |

| static void | preCornerDetect (Mat src, Mat dst, int ksize, int borderType) |

| Calculates a feature map for corner detection. More... | |

| static void | preCornerDetect (Mat src, Mat dst, int ksize) |

| Calculates a feature map for corner detection. More... | |

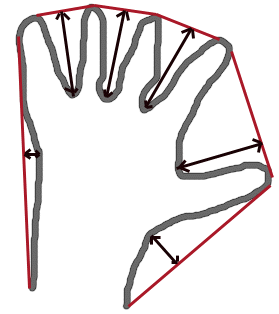

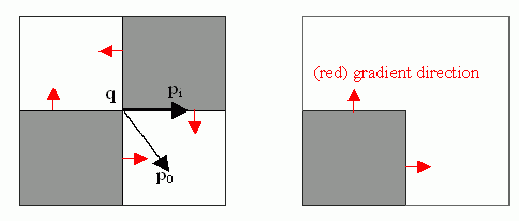

| static void | cornerSubPix (Mat image, Mat corners, Size winSize, Size zeroZone, TermCriteria criteria) |

| Refines the corner locations. More... | |

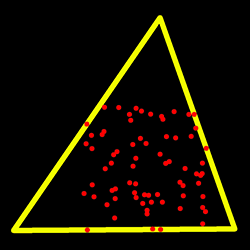

| static void | goodFeaturesToTrack (Mat image, MatOfPoint corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, int blockSize, bool useHarrisDetector, double k) |

| Determines strong corners on an image. More... | |

| static void | goodFeaturesToTrack (Mat image, MatOfPoint corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, int blockSize, bool useHarrisDetector) |

| Determines strong corners on an image. More... | |

| static void | goodFeaturesToTrack (Mat image, MatOfPoint corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, int blockSize) |

| Determines strong corners on an image. More... | |

| static void | goodFeaturesToTrack (Mat image, MatOfPoint corners, int maxCorners, double qualityLevel, double minDistance, Mat mask) |

| Determines strong corners on an image. More... | |

| static void | goodFeaturesToTrack (Mat image, MatOfPoint corners, int maxCorners, double qualityLevel, double minDistance) |

| Determines strong corners on an image. More... | |

| static void | goodFeaturesToTrack (Mat image, MatOfPoint corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, int blockSize, int gradientSize, bool useHarrisDetector, double k) |

| static void | goodFeaturesToTrack (Mat image, MatOfPoint corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, int blockSize, int gradientSize, bool useHarrisDetector) |

| static void | goodFeaturesToTrack (Mat image, MatOfPoint corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, int blockSize, int gradientSize) |

| static void | goodFeaturesToTrackWithQuality (Mat image, Mat corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, Mat cornersQuality, int blockSize, int gradientSize, bool useHarrisDetector, double k) |

| Same as goodFeaturesToTrack, but also returns the quality measure of the detected corners. More... | |

| static void | goodFeaturesToTrackWithQuality (Mat image, Mat corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, Mat cornersQuality, int blockSize, int gradientSize, bool useHarrisDetector) |

| Same as goodFeaturesToTrack, but also returns the quality measure of the detected corners. More... | |

| static void | goodFeaturesToTrackWithQuality (Mat image, Mat corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, Mat cornersQuality, int blockSize, int gradientSize) |

| Same as goodFeaturesToTrack, but also returns the quality measure of the detected corners. More... | |

| static void | goodFeaturesToTrackWithQuality (Mat image, Mat corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, Mat cornersQuality, int blockSize) |

| Same as goodFeaturesToTrack, but also returns the quality measure of the detected corners. More... | |

| static void | goodFeaturesToTrackWithQuality (Mat image, Mat corners, int maxCorners, double qualityLevel, double minDistance, Mat mask, Mat cornersQuality) |

| Same as goodFeaturesToTrack, but also returns the quality measure of the detected corners. More... | |

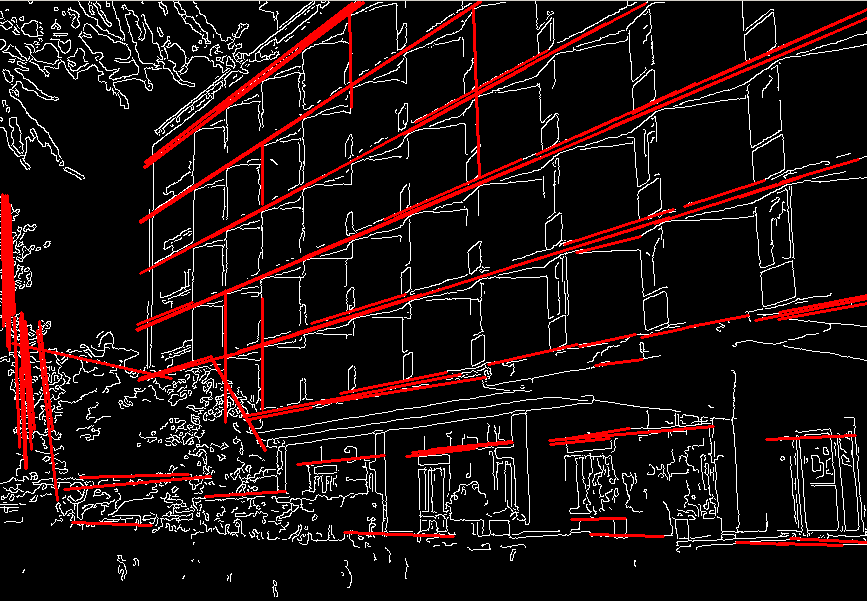

| static void | HoughLines (Mat image, Mat lines, double rho, double theta, int threshold, double srn, double stn, double min_theta, double max_theta) |

| Finds lines in a binary image using the standard Hough transform. More... | |

| static void | HoughLines (Mat image, Mat lines, double rho, double theta, int threshold, double srn, double stn, double min_theta) |

| Finds lines in a binary image using the standard Hough transform. More... | |

| static void | HoughLines (Mat image, Mat lines, double rho, double theta, int threshold, double srn, double stn) |

| Finds lines in a binary image using the standard Hough transform. More... | |

| static void | HoughLines (Mat image, Mat lines, double rho, double theta, int threshold, double srn) |

| Finds lines in a binary image using the standard Hough transform. More... | |

| static void | HoughLines (Mat image, Mat lines, double rho, double theta, int threshold) |

| Finds lines in a binary image using the standard Hough transform. More... | |

| static void | HoughLinesP (Mat image, Mat lines, double rho, double theta, int threshold, double minLineLength, double maxLineGap) |

| Finds line segments in a binary image using the probabilistic Hough transform. More... | |

| static void | HoughLinesP (Mat image, Mat lines, double rho, double theta, int threshold, double minLineLength) |

| Finds line segments in a binary image using the probabilistic Hough transform. More... | |

| static void | HoughLinesP (Mat image, Mat lines, double rho, double theta, int threshold) |

| Finds line segments in a binary image using the probabilistic Hough transform. More... | |

| static void | HoughLinesPointSet (Mat point, Mat lines, int lines_max, int threshold, double min_rho, double max_rho, double rho_step, double min_theta, double max_theta, double theta_step) |

| Finds lines in a set of points using the standard Hough transform. More... | |

| static void | HoughCircles (Mat image, Mat circles, int method, double dp, double minDist, double param1, double param2, int minRadius, int maxRadius) |

| Finds circles in a grayscale image using the Hough transform. More... | |

| static void | HoughCircles (Mat image, Mat circles, int method, double dp, double minDist, double param1, double param2, int minRadius) |

| Finds circles in a grayscale image using the Hough transform. More... | |

| static void | HoughCircles (Mat image, Mat circles, int method, double dp, double minDist, double param1, double param2) |

| Finds circles in a grayscale image using the Hough transform. More... | |

| static void | HoughCircles (Mat image, Mat circles, int method, double dp, double minDist, double param1) |

| Finds circles in a grayscale image using the Hough transform. More... | |

| static void | HoughCircles (Mat image, Mat circles, int method, double dp, double minDist) |

| Finds circles in a grayscale image using the Hough transform. More... | |

| static void | erode (Mat src, Mat dst, Mat kernel, Point anchor, int iterations, int borderType, Scalar borderValue) |

| Erodes an image by using a specific structuring element. More... | |

| static void | erode (Mat src, Mat dst, Mat kernel, Point anchor, int iterations, int borderType) |

| Erodes an image by using a specific structuring element. More... | |

| static void | erode (Mat src, Mat dst, Mat kernel, Point anchor, int iterations) |

| Erodes an image by using a specific structuring element. More... | |

| static void | erode (Mat src, Mat dst, Mat kernel, Point anchor) |

| Erodes an image by using a specific structuring element. More... | |

| static void | erode (Mat src, Mat dst, Mat kernel) |

| Erodes an image by using a specific structuring element. More... | |

| static void | dilate (Mat src, Mat dst, Mat kernel, Point anchor, int iterations, int borderType, Scalar borderValue) |

| Dilates an image by using a specific structuring element. More... | |

| static void | dilate (Mat src, Mat dst, Mat kernel, Point anchor, int iterations, int borderType) |

| Dilates an image by using a specific structuring element. More... | |

| static void | dilate (Mat src, Mat dst, Mat kernel, Point anchor, int iterations) |

| Dilates an image by using a specific structuring element. More... | |

| static void | dilate (Mat src, Mat dst, Mat kernel, Point anchor) |

| Dilates an image by using a specific structuring element. More... | |

| static void | dilate (Mat src, Mat dst, Mat kernel) |

| Dilates an image by using a specific structuring element. More... | |

| static void | morphologyEx (Mat src, Mat dst, int op, Mat kernel, Point anchor, int iterations, int borderType, Scalar borderValue) |

| Performs advanced morphological transformations. More... | |

| static void | morphologyEx (Mat src, Mat dst, int op, Mat kernel, Point anchor, int iterations, int borderType) |

| Performs advanced morphological transformations. More... | |

| static void | morphologyEx (Mat src, Mat dst, int op, Mat kernel, Point anchor, int iterations) |

| Performs advanced morphological transformations. More... | |

| static void | morphologyEx (Mat src, Mat dst, int op, Mat kernel, Point anchor) |

| Performs advanced morphological transformations. More... | |

| static void | morphologyEx (Mat src, Mat dst, int op, Mat kernel) |

| Performs advanced morphological transformations. More... | |

| static void | resize (Mat src, Mat dst, Size dsize, double fx, double fy, int interpolation) |

| Resizes an image. More... | |

| static void | resize (Mat src, Mat dst, Size dsize, double fx, double fy) |

| Resizes an image. More... | |

| static void | resize (Mat src, Mat dst, Size dsize, double fx) |

| Resizes an image. More... | |

| static void | resize (Mat src, Mat dst, Size dsize) |

| Resizes an image. More... | |

| static void | warpAffine (Mat src, Mat dst, Mat M, Size dsize, int flags, int borderMode, Scalar borderValue) |

| Applies an affine transformation to an image. More... | |

| static void | warpAffine (Mat src, Mat dst, Mat M, Size dsize, int flags, int borderMode) |

| Applies an affine transformation to an image. More... | |

| static void | warpAffine (Mat src, Mat dst, Mat M, Size dsize, int flags) |

| Applies an affine transformation to an image. More... | |

| static void | warpAffine (Mat src, Mat dst, Mat M, Size dsize) |

| Applies an affine transformation to an image. More... | |

| static void | warpPerspective (Mat src, Mat dst, Mat M, Size dsize, int flags, int borderMode, Scalar borderValue) |

| Applies a perspective transformation to an image. More... | |

| static void | warpPerspective (Mat src, Mat dst, Mat M, Size dsize, int flags, int borderMode) |

| Applies a perspective transformation to an image. More... | |

| static void | warpPerspective (Mat src, Mat dst, Mat M, Size dsize, int flags) |

| Applies a perspective transformation to an image. More... | |

| static void | warpPerspective (Mat src, Mat dst, Mat M, Size dsize) |

| Applies a perspective transformation to an image. More... | |

| static void | remap (Mat src, Mat dst, Mat map1, Mat map2, int interpolation, int borderMode, Scalar borderValue) |

| Applies a generic geometrical transformation to an image. More... | |

| static void | remap (Mat src, Mat dst, Mat map1, Mat map2, int interpolation, int borderMode) |

| Applies a generic geometrical transformation to an image. More... | |

| static void | remap (Mat src, Mat dst, Mat map1, Mat map2, int interpolation) |

| Applies a generic geometrical transformation to an image. More... | |

| static void | convertMaps (Mat map1, Mat map2, Mat dstmap1, Mat dstmap2, int dstmap1type, bool nninterpolation) |

| Converts image transformation maps from one representation to another. More... | |

| static void | convertMaps (Mat map1, Mat map2, Mat dstmap1, Mat dstmap2, int dstmap1type) |

| Converts image transformation maps from one representation to another. More... | |

| static Mat | getRotationMatrix2D (Point center, double angle, double scale) |

| Calculates an affine matrix of 2D rotation. More... | |
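
To make the shape of the returned matrix concrete, the sketch below builds the 2x3 affine matrix in plain Java from the usual rotation-about-a-center formula: with `alpha = scale * cos(angle)` and `beta = scale * sin(angle)`, the rows are `[alpha, beta, (1 - alpha) * cx - beta * cy]` and `[-beta, alpha, beta * cx + (1 - alpha) * cy]`. The class `RotationMatrixSketch` and its helpers are hypothetical names, and the sketch assumes the angle is given in degrees and uses `double[][]` instead of `Mat`.

```java
import java.util.Arrays;

// Hypothetical illustration class; not part of the OpenCV bindings.
final class RotationMatrixSketch {

    // Builds a 2x3 affine matrix that rotates by angleDeg around (cx, cy)
    // and scales isotropically by `scale`.
    // Assumption: angleDeg is in degrees.
    static double[][] rotationMatrix2D(double cx, double cy, double angleDeg, double scale) {
        double a = Math.toRadians(angleDeg);
        double alpha = scale * Math.cos(a);
        double beta = scale * Math.sin(a);
        return new double[][] {
            { alpha, beta, (1 - alpha) * cx - beta * cy },
            { -beta, alpha, beta * cx + (1 - alpha) * cy }
        };
    }

    // Applies the 2x3 affine matrix m to the point (x, y).
    static double[] apply(double[][] m, double x, double y) {
        return new double[] {
            m[0][0] * x + m[0][1] * y + m[0][2],
            m[1][0] * x + m[1][1] * y + m[1][2]
        };
    }

    public static void main(String[] args) {
        double[][] m = rotationMatrix2D(50, 50, 30, 1.0);
        System.out.println(Arrays.deepToString(m));
        // The rotation center is a fixed point of the transform.
        System.out.println(Arrays.toString(apply(m, 50, 50)));
    }
}
```

The translation column is what keeps the center in place: any point other than `(cx, cy)` moves, while the center maps to itself for every angle and scale.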

| static void | invertAffineTransform (Mat M, Mat iM) |

| Inverts an affine transformation. More... | |

| static Mat | getPerspectiveTransform (Mat src, Mat dst, int solveMethod) |

| Calculates a perspective transform from four pairs of the corresponding points. More... | |

| static Mat | getPerspectiveTransform (Mat src, Mat dst) |

| Calculates a perspective transform from four pairs of the corresponding points. More... | |

| static Mat | getAffineTransform (MatOfPoint2f src, MatOfPoint2f dst) |

| static void | getRectSubPix (Mat image, Size patchSize, Point center, Mat patch, int patchType) |

| Retrieves a pixel rectangle from an image with sub-pixel accuracy. More... | |

| static void | getRectSubPix (Mat image, Size patchSize, Point center, Mat patch) |

| Retrieves a pixel rectangle from an image with sub-pixel accuracy. More... | |

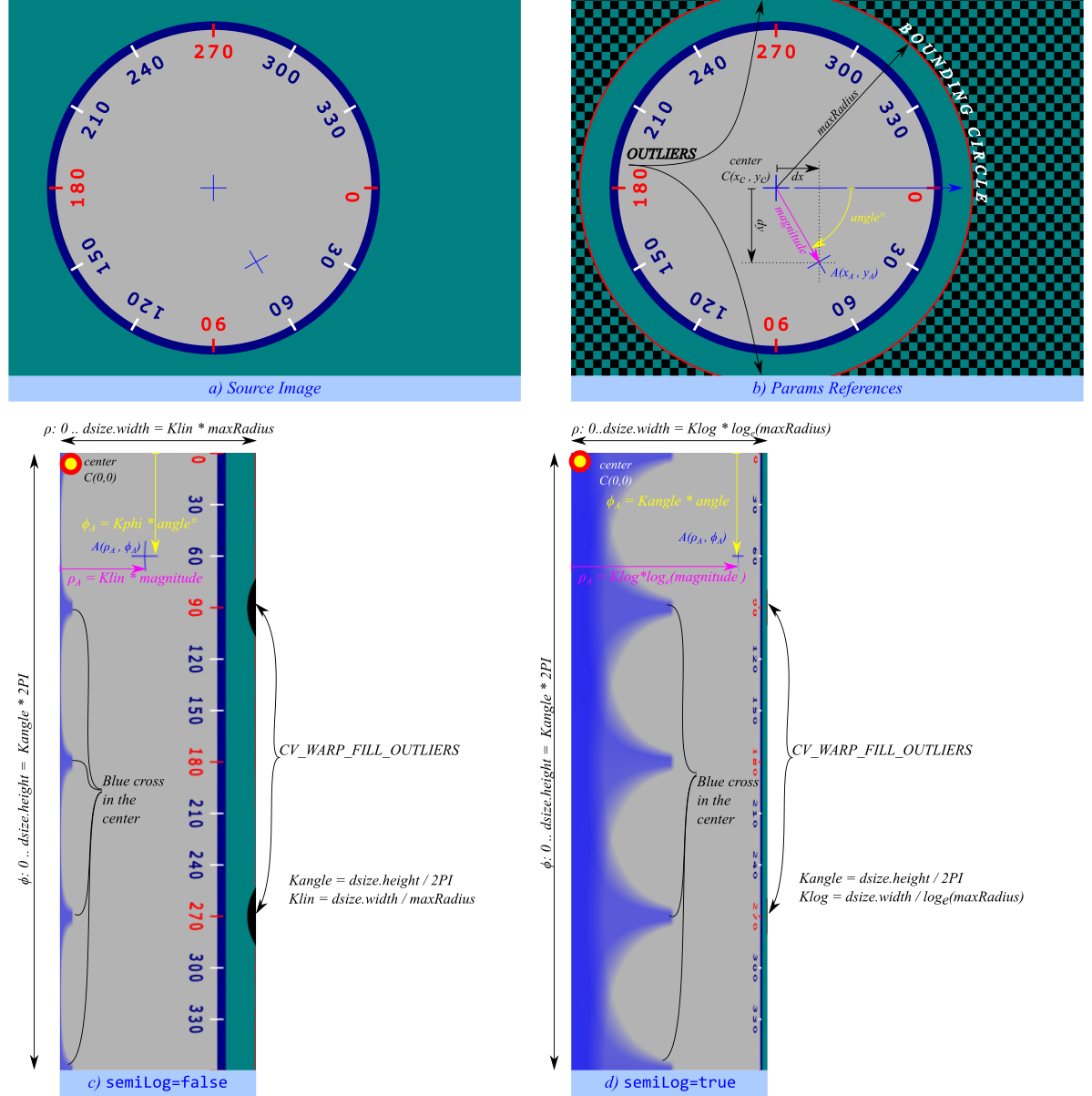

| static void | logPolar (Mat src, Mat dst, Point center, double M, int flags) |

| Remaps an image to semilog-polar coordinates space. More... | |

| static void | linearPolar (Mat src, Mat dst, Point center, double maxRadius, int flags) |

| Remaps an image to polar coordinates space. More... | |

| static void | warpPolar (Mat src, Mat dst, Size dsize, Point center, double maxRadius, int flags) |

| Remaps an image to polar or semilog-polar coordinates space. More... | |

| static void | integral3 (Mat src, Mat sum, Mat sqsum, Mat tilted, int sdepth, int sqdepth) |

| Calculates the integral of an image. More... | |

| static void | integral3 (Mat src, Mat sum, Mat sqsum, Mat tilted, int sdepth) |

| Calculates the integral of an image. More... | |

| static void | integral3 (Mat src, Mat sum, Mat sqsum, Mat tilted) |

| Calculates the integral of an image. More... | |

| static void | integral (Mat src, Mat sum, int sdepth) |

| static void | integral (Mat src, Mat sum) |

| static void | integral2 (Mat src, Mat sum, Mat sqsum, int sdepth, int sqdepth) |

| static void | integral2 (Mat src, Mat sum, Mat sqsum, int sdepth) |

| static void | integral2 (Mat src, Mat sum, Mat sqsum) |
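
For readers unfamiliar with integral images, the following plain-Java sketch shows the idea behind the `sum` output: an array with one extra row and column in which entry `(Y, X)` holds the sum of all source pixels with `x < X` and `y < Y`. The class `IntegralImageSketch`, the use of `int[][]` and `long[][]` in place of `Mat`, and the `rectSum` helper are all assumptions made for illustration. The squared and tilted variants are not covered.

```java
// Hypothetical illustration class; not part of the OpenCV bindings.
final class IntegralImageSketch {

    // Returns a (rows + 1) x (cols + 1) table where sum[Y][X] is the sum of
    // src[y][x] over all y < Y and x < X. Row 0 and column 0 stay zero.
    static long[][] integral(int[][] src) {
        int rows = src.length;
        int cols = rows == 0 ? 0 : src[0].length;
        long[][] sum = new long[rows + 1][cols + 1];
        for (int y = 0; y < rows; y++) {
            for (int x = 0; x < cols; x++) {
                sum[y + 1][x + 1] = src[y][x]
                        + sum[y][x + 1]   // everything above
                        + sum[y + 1][x]   // everything to the left
                        - sum[y][x];      // counted twice, so subtract once
            }
        }
        return sum;
    }

    // Sum of src over the half-open rectangle [x0, x1) x [y0, y1),
    // using four lookups regardless of the rectangle size.
    static long rectSum(long[][] sum, int x0, int y0, int x1, int y1) {
        return sum[y1][x1] - sum[y0][x1] - sum[y1][x0] + sum[y0][x0];
    }

    public static void main(String[] args) {
        int[][] src = { { 1, 2, 3 }, { 4, 5, 6 } };
        long[][] sum = integral(src);
        System.out.println(rectSum(sum, 1, 0, 3, 2)); // sums 2, 3, 5, 6
    }
}
```

The point of the extra zero row and column is that rectangle sums need no boundary special-casing.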

| static void | accumulate (Mat src, Mat dst, Mat mask) |

| Adds an image to the accumulator image. More... | |

| static void | accumulate (Mat src, Mat dst) |

| Adds an image to the accumulator image. More... | |

| static void | accumulateSquare (Mat src, Mat dst, Mat mask) |

| Adds the square of a source image to the accumulator image. More... | |

| static void | accumulateSquare (Mat src, Mat dst) |

| Adds the square of a source image to the accumulator image. More... | |

| static void | accumulateProduct (Mat src1, Mat src2, Mat dst, Mat mask) |

| Adds the per-element product of two input images to the accumulator image. More... | |

| static void | accumulateProduct (Mat src1, Mat src2, Mat dst) |

| Adds the per-element product of two input images to the accumulator image. More... | |

| static void | accumulateWeighted (Mat src, Mat dst, double alpha, Mat mask) |

| Updates a running average. More... | |

| static void | accumulateWeighted (Mat src, Mat dst, double alpha) |

| Updates a running average. More... | |
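
The running-average update can be sketched in plain Java as `dst = (1 - alpha) * dst + alpha * src`, applied per element. The class `RunningAverageSketch` is a hypothetical name, and the sketch works on flat `double[]` arrays and ignores the optional mask, so it only illustrates the arithmetic rather than the binding itself.

```java
import java.util.Arrays;

// Hypothetical illustration class; not part of the OpenCV bindings.
final class RunningAverageSketch {

    // Updates dst in place: dst[i] = (1 - alpha) * dst[i] + alpha * src[i].
    // A larger alpha makes the accumulator forget older inputs faster.
    static void accumulateWeighted(double[] src, double[] dst, double alpha) {
        if (src.length != dst.length) {
            throw new IllegalArgumentException("src and dst must have the same length");
        }
        for (int i = 0; i < src.length; i++) {
            dst[i] = (1.0 - alpha) * dst[i] + alpha * src[i];
        }
    }

    public static void main(String[] args) {
        double[] acc = { 0.0, 10.0 };
        double[] frame = { 10.0, 10.0 };
        accumulateWeighted(frame, acc, 0.5);
        System.out.println(Arrays.toString(acc));
    }
}
```

Calling the update repeatedly with the same input moves the accumulator toward that input, which is why this pattern is commonly used for background estimation.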

| static Point | phaseCorrelate (Mat src1, Mat src2, Mat window, double[] response) |

| Detects translational shifts that occur between two images. More... | |

| static Point | phaseCorrelate (Mat src1, Mat src2, Mat window) |

| Detects translational shifts that occur between two images. More... | |

| static Point | phaseCorrelate (Mat src1, Mat src2) |

| Detects translational shifts that occur between two images. More... | |

| static void | createHanningWindow (Mat dst, Size winSize, int type) |

| Computes Hanning window coefficients in two dimensions. More... | |

| static void | divSpectrums (Mat a, Mat b, Mat c, int flags, bool conjB) |

| Performs the per-element division of the first Fourier spectrum by the second Fourier spectrum. More... | |

| static void | divSpectrums (Mat a, Mat b, Mat c, int flags) |

| Performs the per-element division of the first Fourier spectrum by the second Fourier spectrum. More... | |

| static double | threshold (Mat src, Mat dst, double thresh, double maxval, int type) |

| Applies a fixed-level threshold to each array element. More... | |

| static void | adaptiveThreshold (Mat src, Mat dst, double maxValue, int adaptiveMethod, int thresholdType, int blockSize, double C) |

| Applies an adaptive threshold to an array. More... | |

| static void | pyrDown (Mat src, Mat dst, Size dstsize, int borderType) |

| Blurs an image and downsamples it. More... | |

| static void | pyrDown (Mat src, Mat dst, Size dstsize) |

| Blurs an image and downsamples it. More... | |

| static void | pyrDown (Mat src, Mat dst) |

| Blurs an image and downsamples it. More... | |

| static void | pyrUp (Mat src, Mat dst, Size dstsize, int borderType) |

| Upsamples an image and then blurs it. More... | |

| static void | pyrUp (Mat src, Mat dst, Size dstsize) |

| Upsamples an image and then blurs it. More... | |

| static void | pyrUp (Mat src, Mat dst) |

| Upsamples an image and then blurs it. More... | |

| static void | calcHist (List< Mat > images, MatOfInt channels, Mat mask, Mat hist, MatOfInt histSize, MatOfFloat ranges, bool accumulate) |

| static void | calcHist (List< Mat > images, MatOfInt channels, Mat mask, Mat hist, MatOfInt histSize, MatOfFloat ranges) |

| static void | calcBackProject (List< Mat > images, MatOfInt channels, Mat hist, Mat dst, MatOfFloat ranges, double scale) |

| static double | compareHist (Mat H1, Mat H2, int method) |

| Compares two histograms. More... | |

| static void | equalizeHist (Mat src, Mat dst) |

| Equalizes the histogram of a grayscale image. More... | |

| static CLAHE | createCLAHE (double clipLimit, Size tileGridSize) |

| Creates a smart pointer to a cv::CLAHE class and initializes it. More... | |

| static CLAHE | createCLAHE (double clipLimit) |

| Creates a smart pointer to a cv::CLAHE class and initializes it. More... | |

| static CLAHE | createCLAHE () |

| Creates a smart pointer to a cv::CLAHE class and initializes it. More... | |

| static float | EMD (Mat signature1, Mat signature2, int distType, Mat cost, Mat flow) |

| Computes the "minimal work" distance between two weighted point configurations. More... | |

| static float | EMD (Mat signature1, Mat signature2, int distType, Mat cost) |

| Computes the "minimal work" distance between two weighted point configurations. More... | |

| static float | EMD (Mat signature1, Mat signature2, int distType) |

| Computes the "minimal work" distance between two weighted point configurations. More... | |

| static void | watershed (Mat image, Mat markers) |

| Performs a marker-based image segmentation using the watershed algorithm. More... | |

| static void | pyrMeanShiftFiltering (Mat src, Mat dst, double sp, double sr, int maxLevel, TermCriteria termcrit) |

| Performs the initial step of mean-shift segmentation of an image. More... | |

| static void | pyrMeanShiftFiltering (Mat src, Mat dst, double sp, double sr, int maxLevel) |

| Performs the initial step of mean-shift segmentation of an image. More... | |

| static void | pyrMeanShiftFiltering (Mat src, Mat dst, double sp, double sr) |

| Performs the initial step of mean-shift segmentation of an image. More... | |

| static void | grabCut (Mat img, Mat mask, Rect rect, Mat bgdModel, Mat fgdModel, int iterCount, int mode) |

| Runs the GrabCut algorithm. More... | |

| static void | grabCut (Mat img, Mat mask, Rect rect, Mat bgdModel, Mat fgdModel, int iterCount) |

| Runs the GrabCut algorithm. More... | |

| static void | distanceTransformWithLabels (Mat src, Mat dst, Mat labels, int distanceType, int maskSize, int labelType) |

| Calculates the distance to the closest zero pixel for each pixel of the source image. More... | |

| static void | distanceTransformWithLabels (Mat src, Mat dst, Mat labels, int distanceType, int maskSize) |

| Calculates the distance to the closest zero pixel for each pixel of the source image. More... | |

| static void | distanceTransform (Mat src, Mat dst, int distanceType, int maskSize, int dstType) |

| static void | distanceTransform (Mat src, Mat dst, int distanceType, int maskSize) |

| static int | floodFill (Mat image, Mat mask, Point seedPoint, Scalar newVal, Rect rect, Scalar loDiff, Scalar upDiff, int flags) |

| Fills a connected component with the given color. More... | |

| static int | floodFill (Mat image, Mat mask, Point seedPoint, Scalar newVal, Rect rect, Scalar loDiff, Scalar upDiff) |

| Fills a connected component with the given color. More... | |

| static int | floodFill (Mat image, Mat mask, Point seedPoint, Scalar newVal, Rect rect, Scalar loDiff) |

| Fills a connected component with the given color. More... | |

| static int | floodFill (Mat image, Mat mask, Point seedPoint, Scalar newVal, Rect rect) |

| Fills a connected component with the given color. More... | |

| static int | floodFill (Mat image, Mat mask, Point seedPoint, Scalar newVal) |

| Fills a connected component with the given color. More... | |

| static void | blendLinear (Mat src1, Mat src2, Mat weights1, Mat weights2, Mat dst) |

| static void | cvtColor (Mat src, Mat dst, int code, int dstCn) |

| Converts an image from one color space to another. More... | |

| static void | cvtColor (Mat src, Mat dst, int code) |

| Converts an image from one color space to another. More... | |

| static void | cvtColorTwoPlane (Mat src1, Mat src2, Mat dst, int code) |

| Converts an image from one color space to another where the source image is stored in two planes. More... | |

| static void | demosaicing (Mat src, Mat dst, int code, int dstCn) |

| Main function for all demosaicing processes. More... | |

| static void | demosaicing (Mat src, Mat dst, int code) |

| Main function for all demosaicing processes. More... | |

| static Moments | moments (Mat array, bool binaryImage) |

| Calculates all of the moments up to the third order of a polygon or rasterized shape. More... | |

| static Moments | moments (Mat array) |

| Calculates all of the moments up to the third order of a polygon or rasterized shape. More... | |

| static void | HuMoments (Moments m, Mat hu) |

| static void | matchTemplate (Mat image, Mat templ, Mat result, int method, Mat mask) |

| Compares a template against overlapped image regions. More... | |

| static void | matchTemplate (Mat image, Mat templ, Mat result, int method) |

| Compares a template against overlapped image regions. More... | |

| static int | connectedComponentsWithAlgorithm (Mat image, Mat labels, int connectivity, int ltype, int ccltype) |

| Computes the connected-components labeled image of a boolean image. More... | |

| static int | connectedComponents (Mat image, Mat labels, int connectivity, int ltype) |

| static int | connectedComponents (Mat image, Mat labels, int connectivity) |

| static int | connectedComponents (Mat image, Mat labels) |

| static int | connectedComponentsWithStatsWithAlgorithm (Mat image, Mat labels, Mat stats, Mat centroids, int connectivity, int ltype, int ccltype) |

| Computes the connected-components labeled image of a boolean image and also produces a statistics output for each label. More... | |

| static int | connectedComponentsWithStats (Mat image, Mat labels, Mat stats, Mat centroids, int connectivity, int ltype) |

| static int | connectedComponentsWithStats (Mat image, Mat labels, Mat stats, Mat centroids, int connectivity) |

| static int | connectedComponentsWithStats (Mat image, Mat labels, Mat stats, Mat centroids) |

| static void | findContours (Mat image, List< MatOfPoint > contours, Mat hierarchy, int mode, int method, Point offset) |

| Finds contours in a binary image. More... | |

| static void | findContours (Mat image, List< MatOfPoint > contours, Mat hierarchy, int mode, int method) |

| Finds contours in a binary image. More... | |

| static void | findContoursLinkRuns (Mat image, List< Mat > contours, Mat hierarchy) |

| static void | findContoursLinkRuns (Mat image, List< Mat > contours) |

| static void | approxPolyDP (MatOfPoint2f curve, MatOfPoint2f approxCurve, double epsilon, bool closed) |

| Approximates a polygonal curve(s) with the specified precision. More... | |

| static double | arcLength (MatOfPoint2f curve, bool closed) |

| Calculates a contour perimeter or a curve length. More... | |

| static Rect | boundingRect (Mat array) |

| Calculates the up-right bounding rectangle of a point set or non-zero pixels of gray-scale image. More... | |

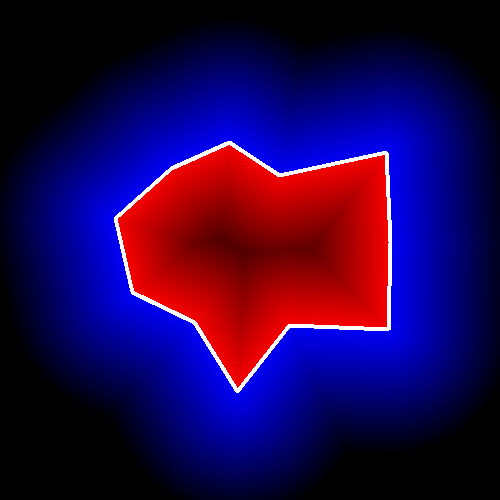

| static double | contourArea (Mat contour, bool oriented) |

| Calculates a contour area. More... | |

| static double | contourArea (Mat contour) |

| Calculates a contour area. More... | |

| static RotatedRect | minAreaRect (MatOfPoint2f points) |

| Finds a rotated rectangle of the minimum area enclosing the input 2D point set. More... | |

| static void | boxPoints (RotatedRect box, Mat points) |

| Finds the four vertices of a rotated rect. Useful to draw the rotated rectangle. More... | |

| static void | minEnclosingCircle (MatOfPoint2f points, Point center, float[] radius) |

| Finds a circle of the minimum area enclosing a 2D point set. More... | |

| static double | minEnclosingTriangle (Mat points, Mat triangle) |

| Finds a triangle of minimum area enclosing a 2D point set and returns its area. More... | |

| static double | matchShapes (Mat contour1, Mat contour2, int method, double parameter) |

| Compares two shapes. More... | |

| static void | convexHull (MatOfPoint points, MatOfInt hull, bool clockwise) |

| Finds the convex hull of a point set. More... | |

| static void | convexHull (MatOfPoint points, MatOfInt hull) |

| Finds the convex hull of a point set. More... | |

| static void | convexityDefects (MatOfPoint contour, MatOfInt convexhull, MatOfInt4 convexityDefects) |

| Finds the convexity defects of a contour. More... | |

| static bool | isContourConvex (MatOfPoint contour) |

| Tests a contour convexity. More... | |

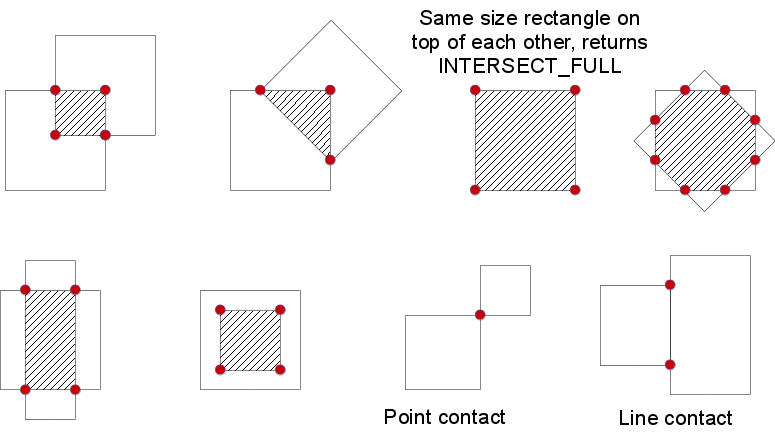

| static float | intersectConvexConvex (Mat p1, Mat p2, Mat p12, bool handleNested) |

| Finds intersection of two convex polygons. More... | |

| static float | intersectConvexConvex (Mat p1, Mat p2, Mat p12) |

| Finds intersection of two convex polygons. More... | |

| static RotatedRect | fitEllipse (MatOfPoint2f points) |

| Fits an ellipse around a set of 2D points. More... | |

| static RotatedRect | fitEllipseAMS (Mat points) |

| Fits an ellipse around a set of 2D points. More... | |

| static RotatedRect | fitEllipseDirect (Mat points) |

| Fits an ellipse around a set of 2D points. More... | |

| static void | fitLine (Mat points, Mat line, int distType, double param, double reps, double aeps) |

| Fits a line to a 2D or 3D point set. More... | |

| static double | pointPolygonTest (MatOfPoint2f contour, Point pt, bool measureDist) |

| Performs a point-in-contour test. More... | |

| static int | rotatedRectangleIntersection (RotatedRect rect1, RotatedRect rect2, Mat intersectingRegion) |

| Finds out if there is any intersection between two rotated rectangles. More... | |

| static GeneralizedHoughBallard | createGeneralizedHoughBallard () |

| Creates a smart pointer to a cv::GeneralizedHoughBallard class and initializes it. More... | |

| static GeneralizedHoughGuil | createGeneralizedHoughGuil () |

| Creates a smart pointer to a cv::GeneralizedHoughGuil class and initializes it. More... | |

| static void | applyColorMap (Mat src, Mat dst, int colormap) |

| Applies a GNU Octave/MATLAB equivalent colormap on a given image. More... | |

| static void | applyColorMap (Mat src, Mat dst, Mat userColor) |

| Applies a user colormap on a given image. More... | |

| static void | line (Mat img, Point pt1, Point pt2, Scalar color, int thickness, int lineType, int shift) |

| Draws a line segment connecting two points. More... | |

| static void | line (Mat img, Point pt1, Point pt2, Scalar color, int thickness, int lineType) |

| Draws a line segment connecting two points. More... | |

| static void | line (Mat img, Point pt1, Point pt2, Scalar color, int thickness) |

| Draws a line segment connecting two points. More... | |

| static void | line (Mat img, Point pt1, Point pt2, Scalar color) |

| Draws a line segment connecting two points. More... | |

| static void | arrowedLine (Mat img, Point pt1, Point pt2, Scalar color, int thickness, int line_type, int shift, double tipLength) |

| Draws an arrow segment pointing from the first point to the second one. More... | |

| static void | arrowedLine (Mat img, Point pt1, Point pt2, Scalar color, int thickness, int line_type, int shift) |

| Draws an arrow segment pointing from the first point to the second one. More... | |

| static void | arrowedLine (Mat img, Point pt1, Point pt2, Scalar color, int thickness, int line_type) |

| Draws an arrow segment pointing from the first point to the second one. More... | |

| static void | arrowedLine (Mat img, Point pt1, Point pt2, Scalar color, int thickness) |

| Draws an arrow segment pointing from the first point to the second one. More... | |

| static void | arrowedLine (Mat img, Point pt1, Point pt2, Scalar color) |

| Draws an arrow segment pointing from the first point to the second one. More... | |

| static void | rectangle (Mat img, Point pt1, Point pt2, Scalar color, int thickness, int lineType, int shift) |

| Draws a simple, thick, or filled up-right rectangle. More... | |

| static void | rectangle (Mat img, Point pt1, Point pt2, Scalar color, int thickness, int lineType) |

| Draws a simple, thick, or filled up-right rectangle. More... | |

| static void | rectangle (Mat img, Point pt1, Point pt2, Scalar color, int thickness) |

| Draws a simple, thick, or filled up-right rectangle. More... | |

| static void | rectangle (Mat img, Point pt1, Point pt2, Scalar color) |

| Draws a simple, thick, or filled up-right rectangle. More... | |

| static void | rectangle (Mat img, Rect rec, Scalar color, int thickness, int lineType, int shift) |

| static void | rectangle (Mat img, Rect rec, Scalar color, int thickness, int lineType) |

| static void | rectangle (Mat img, Rect rec, Scalar color, int thickness) |

| static void | rectangle (Mat img, Rect rec, Scalar color) |

| static void | circle (Mat img, Point center, int radius, Scalar color, int thickness, int lineType, int shift) |

| Draws a circle. More... | |

| static void | circle (Mat img, Point center, int radius, Scalar color, int thickness, int lineType) |

| Draws a circle. More... | |

| static void | circle (Mat img, Point center, int radius, Scalar color, int thickness) |

| Draws a circle. More... | |

| static void | circle (Mat img, Point center, int radius, Scalar color) |

| Draws a circle. More... | |

| static void | ellipse (Mat img, Point center, Size axes, double angle, double startAngle, double endAngle, Scalar color, int thickness, int lineType, int shift) |

| Draws a simple or thick elliptic arc or fills an ellipse sector. More... | |

| static void | ellipse (Mat img, Point center, Size axes, double angle, double startAngle, double endAngle, Scalar color, int thickness, int lineType) |

| Draws a simple or thick elliptic arc or fills an ellipse sector. More... | |

| static void | ellipse (Mat img, Point center, Size axes, double angle, double startAngle, double endAngle, Scalar color, int thickness) |

| Draws a simple or thick elliptic arc or fills an ellipse sector. More... | |

| static void | ellipse (Mat img, Point center, Size axes, double angle, double startAngle, double endAngle, Scalar color) |

| Draws a simple or thick elliptic arc or fills an ellipse sector. More... | |

| static void | ellipse (Mat img, RotatedRect box, Scalar color, int thickness, int lineType) |

| static void | ellipse (Mat img, RotatedRect box, Scalar color, int thickness) |

| static void | ellipse (Mat img, RotatedRect box, Scalar color) |

| static void | drawMarker (Mat img, Point position, Scalar color, int markerType, int markerSize, int thickness, int line_type) |

| Draws a marker on a predefined position in an image. More... | |

| static void | drawMarker (Mat img, Point position, Scalar color, int markerType, int markerSize, int thickness) |

| Draws a marker on a predefined position in an image. More... | |

| static void | drawMarker (Mat img, Point position, Scalar color, int markerType, int markerSize) |

| Draws a marker on a predefined position in an image. More... | |

| static void | drawMarker (Mat img, Point position, Scalar color, int markerType) |

| Draws a marker on a predefined position in an image. More... | |

| static void | drawMarker (Mat img, Point position, Scalar color) |

| Draws a marker on a predefined position in an image. More... | |

| static void | fillConvexPoly (Mat img, MatOfPoint points, Scalar color, int lineType, int shift) |

| Fills a convex polygon. More... | |

| static void | fillConvexPoly (Mat img, MatOfPoint points, Scalar color, int lineType) |

| Fills a convex polygon. More... | |

| static void | fillConvexPoly (Mat img, MatOfPoint points, Scalar color) |

| Fills a convex polygon. More... | |

| static void | fillPoly (Mat img, List< MatOfPoint > pts, Scalar color, int lineType, int shift, Point offset) |

| Fills the area bounded by one or more polygons. More... | |

| static void | fillPoly (Mat img, List< MatOfPoint > pts, Scalar color, int lineType, int shift) |

| Fills the area bounded by one or more polygons. More... | |

| static void | fillPoly (Mat img, List< MatOfPoint > pts, Scalar color, int lineType) |

| Fills the area bounded by one or more polygons. More... | |

| static void | fillPoly (Mat img, List< MatOfPoint > pts, Scalar color) |

| Fills the area bounded by one or more polygons. More... | |

| static void | polylines (Mat img, List< MatOfPoint > pts, bool isClosed, Scalar color, int thickness, int lineType, int shift) |

| Draws several polygonal curves. More... | |

| static void | polylines (Mat img, List< MatOfPoint > pts, bool isClosed, Scalar color, int thickness, int lineType) |

| Draws several polygonal curves. More... | |

| static void | polylines (Mat img, List< MatOfPoint > pts, bool isClosed, Scalar color, int thickness) |

| Draws several polygonal curves. More... | |

| static void | polylines (Mat img, List< MatOfPoint > pts, bool isClosed, Scalar color) |

| Draws several polygonal curves. More... | |

| static void | drawContours (Mat image, List< MatOfPoint > contours, int contourIdx, Scalar color, int thickness, int lineType, Mat hierarchy, int maxLevel, Point offset) |

| Draws contours outlines or filled contours. More... | |

| static void | drawContours (Mat image, List< MatOfPoint > contours, int contourIdx, Scalar color, int thickness, int lineType, Mat hierarchy, int maxLevel) |

| Draws contours outlines or filled contours. More... | |

| static void | drawContours (Mat image, List< MatOfPoint > contours, int contourIdx, Scalar color, int thickness, int lineType, Mat hierarchy) |

| Draws contours outlines or filled contours. More... | |

| static void | drawContours (Mat image, List< MatOfPoint > contours, int contourIdx, Scalar color, int thickness, int lineType) |

| Draws contours outlines or filled contours. More... | |

| static void | drawContours (Mat image, List< MatOfPoint > contours, int contourIdx, Scalar color, int thickness) |

| Draws contours outlines or filled contours. More... | |

| static void | drawContours (Mat image, List< MatOfPoint > contours, int contourIdx, Scalar color) |

| Draws contours outlines or filled contours. More... | |

| static bool | clipLine (Rect imgRect, Point pt1, Point pt2) |

| static void | ellipse2Poly (Point center, Size axes, int angle, int arcStart, int arcEnd, int delta, MatOfPoint pts) |

| Approximates an elliptic arc with a polyline. More... | |

| static void | putText (Mat img, string text, Point org, int fontFace, double fontScale, Scalar color, int thickness, int lineType, bool bottomLeftOrigin) |

| Draws a text string. More... | |

| static void | putText (Mat img, string text, Point org, int fontFace, double fontScale, Scalar color, int thickness, int lineType) |

| Draws a text string. More... | |

| static void | putText (Mat img, string text, Point org, int fontFace, double fontScale, Scalar color, int thickness) |

| Draws a text string. More... | |

| static void | putText (Mat img, string text, Point org, int fontFace, double fontScale, Scalar color) |

| Draws a text string. More... | |

| static double | getFontScaleFromHeight (int fontFace, int pixelHeight, int thickness) |

| Calculates the font-specific size to use to achieve a given height in pixels. More... | |

| static double | getFontScaleFromHeight (int fontFace, int pixelHeight) |

| Calculates the font-specific size to use to achieve a given height in pixels. More... | |

| static void | HoughLinesWithAccumulator (Mat image, Mat lines, double rho, double theta, int threshold, double srn, double stn, double min_theta, double max_theta) |

| Finds lines in a binary image using the standard Hough transform and gets the accumulator. More... | |

| static void | HoughLinesWithAccumulator (Mat image, Mat lines, double rho, double theta, int threshold, double srn, double stn, double min_theta) |

| Finds lines in a binary image using the standard Hough transform and gets the accumulator. More... | |

| static void | HoughLinesWithAccumulator (Mat image, Mat lines, double rho, double theta, int threshold, double srn, double stn) |

| Finds lines in a binary image using the standard Hough transform and gets the accumulator. More... | |

| static void | HoughLinesWithAccumulator (Mat image, Mat lines, double rho, double theta, int threshold, double srn) |

| Finds lines in a binary image using the standard Hough transform and gets the accumulator. More... | |

| static void | HoughLinesWithAccumulator (Mat image, Mat lines, double rho, double theta, int threshold) |

| Finds lines in a binary image using the standard Hough transform and gets the accumulator. More... | |

| static Size | getTextSize (string text, int fontFace, double fontScale, int thickness, int[] baseLine) |

Member Function Documentation

◆ accumulate() [1/2]

Adds an image to the accumulator image.

The function adds src or some of its elements to dst :

\[\texttt{dst} (x,y) \leftarrow \texttt{dst} (x,y) + \texttt{src} (x,y) \quad \text{if} \quad \texttt{mask} (x,y) \ne 0\]

The function supports multi-channel images. Each channel is processed independently.

The function cv::accumulate can be used, for example, to collect statistics of a scene background viewed by a still camera, which can then feed foreground-background segmentation.

- Parameters

  - src: Input image of type CV_8UC(n), CV_16UC(n), CV_32FC(n) or CV_64FC(n), where n is a positive integer.
  - dst: Accumulator image with the same number of channels as input image, and a depth of CV_32F or CV_64F.
  - mask: Optional operation mask.

◆ accumulate() [2/2]

Adds an image to the accumulator image.

The function adds src or some of its elements to dst :

\[\texttt{dst} (x,y) \leftarrow \texttt{dst} (x,y) + \texttt{src} (x,y) \quad \text{if} \quad \texttt{mask} (x,y) \ne 0\]

The function supports multi-channel images. Each channel is processed independently.

The function cv::accumulate can be used, for example, to collect statistics of a scene background viewed by a still camera, which can then feed foreground-background segmentation.

- Parameters

  - src: Input image of type CV_8UC(n), CV_16UC(n), CV_32FC(n) or CV_64FC(n), where n is a positive integer.
  - dst: Accumulator image with the same number of channels as input image, and a depth of CV_32F or CV_64F.
  - mask: Optional operation mask.
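
As a rough illustration of the update rule above, the following pure-Python sketch applies it to single-channel images stored as nested lists. The helper name `accumulate_image` and the list-based image layout are assumptions made for this example; it is not the library implementation.

```python
def accumulate_image(src, dst, mask=None):
    """Add src to dst in place wherever mask is non-zero (everywhere if mask is None)."""
    for y, row in enumerate(src):
        for x, value in enumerate(row):
            if mask is None or mask[y][x] != 0:
                dst[y][x] += float(value)
    return dst


# Two 2x2 "frames" from a still camera (made-up values).
frames = [
    [[10, 20], [30, 40]],
    [[12, 18], [30, 44]],
]

# Floating-point accumulator, matching the depth required of dst.
background_sum = [[0.0, 0.0], [0.0, 0.0]]
for frame in frames:
    accumulate_image(frame, background_sum)

# Dividing the sum by the frame count gives a per-pixel background mean.
background_mean = [[v / len(frames) for v in row] for row in background_sum]

# With a mask, only pixels whose mask value is non-zero are updated.
masked_sum = [[0.0, 0.0], [0.0, 0.0]]
accumulate_image(frames[0], masked_sum, mask=[[1, 0], [0, 1]])
```

For a multi-channel image the same update would run on each channel independently.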

◆ accumulateProduct() [1/2]

Adds the per-element product of two input images to the accumulator image.

The function adds the product of two images or their selected regions to the accumulator dst :

\[\texttt{dst} (x,y) \leftarrow \texttt{dst} (x,y) + \texttt{src1} (x,y) \cdot \texttt{src2} (x,y) \quad \text{if} \quad \texttt{mask} (x,y) \ne 0\]

The function supports multi-channel images. Each channel is processed independently.

- Parameters

  - src1: First input image, 1- or 3-channel, 8-bit or 32-bit floating point.
  - src2: Second input image of the same type and the same size as src1.
  - dst: Accumulator image with the same number of channels as input images, 32-bit or 64-bit floating-point.
  - mask: Optional operation mask.

- See also

- accumulate, accumulateSquare, accumulateWeighted

◆ accumulateProduct() [2/2]

Adds the per-element product of two input images to the accumulator image.

The function adds the product of two images or their selected regions to the accumulator dst :

\[\texttt{dst} (x,y) \leftarrow \texttt{dst} (x,y) + \texttt{src1} (x,y) \cdot \texttt{src2} (x,y) \quad \text{if} \quad \texttt{mask} (x,y) \ne 0\]

The function supports multi-channel images. Each channel is processed independently.

- Parameters

  - src1: First input image, 1- or 3-channel, 8-bit or 32-bit floating point.
  - src2: Second input image of the same type and the same size as src1.
  - dst: Accumulator image with the same number of channels as input images, 32-bit or 64-bit floating-point.
  - mask: Optional operation mask.

- See also

- accumulate, accumulateSquare, accumulateWeighted
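
The masked product update can be sketched in pure Python as follows. The function name `accumulate_product` and the nested-list images are assumptions for illustration only, and the sketch handles a single channel.

```python
def accumulate_product(src1, src2, dst, mask=None):
    """Add src1 * src2 (per element) to dst wherever mask is non-zero."""
    for y in range(len(src1)):
        for x in range(len(src1[y])):
            if mask is None or mask[y][x] != 0:
                dst[y][x] += float(src1[y][x]) * float(src2[y][x])
    return dst


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
product_sum = [[0.0, 0.0], [0.0, 0.0]]

# The bottom-left pixel is masked out, so its accumulator entry stays 0.
accumulate_product(a, b, product_sum, mask=[[1, 1], [0, 1]])
```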

◆ accumulateSquare() [1/2]

Adds the square of a source image to the accumulator image.

The function adds the input image src or its selected region, raised to a power of 2, to the accumulator dst :

\[\texttt{dst} (x,y) \leftarrow \texttt{dst} (x,y) + \texttt{src} (x,y)^2 \quad \text{if} \quad \texttt{mask} (x,y) \ne 0\]

The function supports multi-channel images. Each channel is processed independently.

- Parameters

  - src: Input image as 1- or 3-channel, 8-bit or 32-bit floating point.
  - dst: Accumulator image with the same number of channels as input image, 32-bit or 64-bit floating-point.
  - mask: Optional operation mask.

◆ accumulateSquare() [2/2]

Adds the square of a source image to the accumulator image.

The function adds the input image src or its selected region, raised to a power of 2, to the accumulator dst :

\[\texttt{dst} (x,y) \leftarrow \texttt{dst} (x,y) + \texttt{src} (x,y)^2 \quad \text{if} \quad \texttt{mask} (x,y) \ne 0\]

The function supports multi-channel images. Each channel is processed independently.

- Parameters

  - src: Input image as 1- or 3-channel, 8-bit or 32-bit floating point.
  - dst: Accumulator image with the same number of channels as input image, 32-bit or 64-bit floating-point.
  - mask: Optional operation mask.
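
One common use of a sum of squares is a per-pixel variance estimate, using Var[x] = E[x^2] - E[x]^2. The sketch below is a pure-Python illustration under that assumption; `accumulate_square`, the nested-list images, and the sample values are hypothetical and not taken from the library.

```python
def accumulate_square(src, dst, mask=None):
    """Add src squared (per element) to dst wherever mask is non-zero."""
    for y, row in enumerate(src):
        for x, value in enumerate(row):
            if mask is None or mask[y][x] != 0:
                dst[y][x] += float(value) ** 2
    return dst


# Three 1x2 frames: the first pixel varies, the second is constant.
frames = [[[2, 4]], [[4, 4]], [[6, 4]]]

pixel_sum = [[0.0, 0.0]]
pixel_sum_sq = [[0.0, 0.0]]
for frame in frames:
    for x, value in enumerate(frame[0]):
        pixel_sum[0][x] += float(value)  # plain accumulation of the frame
    accumulate_square(frame, pixel_sum_sq)

n = len(frames)
variance = [
    [pixel_sum_sq[0][x] / n - (pixel_sum[0][x] / n) ** 2 for x in range(2)]
]
```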

◆ accumulateWeighted() [1/2]

Updates a running average.

The function calculates the weighted sum of the input image src and the accumulator dst so that dst becomes a running average of a frame sequence:

\[\texttt{dst} (x,y) \leftarrow (1- \texttt{alpha} ) \cdot \texttt{dst} (x,y) + \texttt{alpha} \cdot \texttt{src} (x,y) \quad \text{if} \quad \texttt{mask} (x,y) \ne 0\]

That is, alpha regulates the update speed (how fast the accumulator "forgets" about earlier images). The function supports multi-channel images. Each channel is processed independently.

- Parameters

  - src: Input image as 1- or 3-channel, 8-bit or 32-bit floating point.
  - dst: Accumulator image with the same number of channels as input image, 32-bit or 64-bit floating-point.
  - alpha: Weight of the input image.
  - mask: Optional operation mask.

- See also

- accumulate, accumulateSquare, accumulateProduct

◆ accumulateWeighted() [2/2]

Updates a running average.

The function calculates the weighted sum of the input image src and the accumulator dst so that dst becomes a running average of a frame sequence:

\[\texttt{dst} (x,y) \leftarrow (1- \texttt{alpha} ) \cdot \texttt{dst} (x,y) + \texttt{alpha} \cdot \texttt{src} (x,y) \quad \text{if} \quad \texttt{mask} (x,y) \ne 0\]

That is, alpha regulates the update speed (how fast the accumulator "forgets" about earlier images). The function supports multi-channel images. Each channel is processed independently.

- Parameters

  - src: Input image as 1- or 3-channel, 8-bit or 32-bit floating point.
  - dst: Accumulator image with the same number of channels as input image, 32-bit or 64-bit floating-point.
  - alpha: Weight of the input image.
  - mask: Optional operation mask.

- See also

- accumulate, accumulateSquare, accumulateProduct
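
To make the effect of alpha concrete, here is a small pure-Python sketch of the running-average update on a one-pixel image. The name `accumulate_weighted` and the sample values are assumptions for this example, not the library code.

```python
def accumulate_weighted(src, dst, alpha, mask=None):
    """Blend src into dst: dst = (1 - alpha) * dst + alpha * src where mask is non-zero."""
    for y, row in enumerate(src):
        for x, value in enumerate(row):
            if mask is None or mask[y][x] != 0:
                dst[y][x] = (1.0 - alpha) * dst[y][x] + alpha * float(value)
    return dst


running = [[0.0]]   # accumulator starts at 0
history = []
for _ in range(3):
    # The same bright frame arrives three times in a row.
    accumulate_weighted([[100]], running, alpha=0.5)
    history.append(running[0][0])
```

With alpha = 0.5 the accumulator moves halfway toward the new frame on every call (50, 75, 87.5). A smaller alpha would make it "forget" earlier frames more slowly.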

◆ adaptiveThreshold()

Applies an adaptive threshold to an array.

The function transforms a grayscale image to a binary image according to the formulae:

- THRESH_BINARY

\[dst(x,y) = \begin{cases} \texttt{maxValue} & \text{if } src(x,y) > T(x,y) \\ 0 & \text{otherwise} \end{cases}\]

- THRESH_BINARY_INV

\[dst(x,y) = \begin{cases} 0 & \text{if } src(x,y) > T(x,y) \\ \texttt{maxValue} & \text{otherwise} \end{cases}\]

where \(T(x,y)\) is a threshold calculated individually for each pixel (see adaptiveMethod parameter).

The function can process the image in-place.

- Parameters

  - src: Source 8-bit single-channel image.
  - dst: Destination image of the same size and the same type as src.
  - maxValue: Non-zero value assigned to the pixels for which the condition is satisfied.
  - adaptiveMethod: Adaptive thresholding algorithm to use, see AdaptiveThresholdTypes. BORDER_REPLICATE | BORDER_ISOLATED is used to process boundaries.
  - thresholdType: Thresholding type that must be either THRESH_BINARY or THRESH_BINARY_INV, see ThresholdTypes.
  - blockSize: Size of a pixel neighborhood that is used to calculate a threshold value for the pixel: 3, 5, 7, and so on.
  - C: Constant subtracted from the mean or weighted mean (see the details below). Normally, it is positive but may be zero or negative as well.

- See also

- threshold, blur, GaussianBlur
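
The two formulae above can be sketched in pure Python for the mean-based case, where T(x,y) is taken as the mean of the blockSize x blockSize neighborhood minus C. The function name `adaptive_threshold_mean`, the nested-list image, and the clamping used to replicate the border are assumptions for illustration; rounding and other details of the library implementation may differ.

```python
def adaptive_threshold_mean(src, max_value, block_size, c, invert=False):
    """Threshold each pixel against the mean of its neighborhood minus c."""
    if block_size < 3 or block_size % 2 == 0:
        raise ValueError("block_size must be an odd number >= 3")
    height, width = len(src), len(src[0])
    radius = block_size // 2
    dst = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total = 0
            for dy in range(-radius, radius + 1):
                yy = min(max(y + dy, 0), height - 1)  # replicate the border
                for dx in range(-radius, radius + 1):
                    xx = min(max(x + dx, 0), width - 1)
                    total += src[yy][xx]
            threshold = total / (block_size * block_size) - c
            above = src[y][x] > threshold
            # invert=False follows THRESH_BINARY, invert=True follows THRESH_BINARY_INV.
            dst[y][x] = max_value if above != invert else 0
    return dst


image = [
    [10, 10, 10],
    [10, 200, 10],
    [10, 10, 10],
]
binary = adaptive_threshold_mean(image, 255, block_size=3, c=5)
binary_inv = adaptive_threshold_mean(image, 255, block_size=3, c=5, invert=True)
```

Only the bright center pixel exceeds its local threshold here, so it is the only pixel set to maxValue in `binary`.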

◆ applyColorMap() [1/2]

Applies a GNU Octave/MATLAB equivalent colormap on a given image.

- Parameters

  - src: The source image, grayscale or colored of type CV_8UC1 or CV_8UC3. If CV_8UC3, then the CV_8UC1 image is generated internally using cv::COLOR_BGR2GRAY.
  - dst: The result is the colormapped source image. Note: Mat::create is called on dst.
  - colormap: The colormap to apply, see ColormapTypes.

◆ applyColorMap() [2/2]

Applies a user colormap on a given image.

- Parameters

  - src: The source image, grayscale or colored of type CV_8UC1 or CV_8UC3. If CV_8UC3, then the CV_8UC1 image is generated internally using cv::COLOR_BGR2GRAY.
  - dst: The result is the colormapped source image of the same number of channels as userColor. Note: Mat::create is called on dst.
  - userColor: The colormap to apply of type CV_8UC1 or CV_8UC3 and size 256.
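
Conceptually, a user colormap of size 256 acts as a lookup table indexed by gray level. The sketch below shows that idea in pure Python on a grayscale image; `apply_user_colormap` and the blue-to-red table are hypothetical, and the internal grayscale conversion of color inputs is not modeled.

```python
def apply_user_colormap(gray, user_color):
    """Map every 8-bit gray level through a 256-entry lookup table."""
    if len(user_color) != 256:
        raise ValueError("user_color must have exactly 256 entries")
    return [[user_color[value] for value in row] for row in gray]


# Hypothetical 3-channel table in (B, G, R) order: blue for 0, red for 255.
blue_to_red = [(255 - level, 0, level) for level in range(256)]

gray_image = [[0, 128, 255]]
colored = apply_user_colormap(gray_image, blue_to_red)
```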

◆ approxPolyDP()

Approximates a polygonal curve(s) with the specified precision.

The function cv::approxPolyDP approximates a curve or a polygon with another curve or polygon that has fewer vertices, so that the distance between them is less than or equal to the specified precision. It uses the Douglas-Peucker algorithm <http://en.wikipedia.org/wiki/Ramer-Douglas-Peucker_algorithm>

- Parameters

  - curve: Input vector of 2D points, stored in std::vector or Mat.
  - approxCurve: Result of the approximation. The type should match the type of the input curve.
  - epsilon: Parameter specifying the approximation accuracy. This is the maximum distance between the original curve and its approximation.
  - closed: If true, the approximated curve is closed (its first and last vertices are connected). Otherwise, it is not closed.
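
For readers unfamiliar with the Douglas-Peucker idea, here is a minimal recursive sketch in pure Python for an open curve. The function names are hypothetical, the distance is measured to the chord segment (one common variant), and closed curves are not handled; it illustrates the algorithm rather than reproducing the library implementation.

```python
import math


def point_segment_distance(p, a, b):
    """Distance from point p to the segment a-b."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    if dx == 0 and dy == 0:
        return math.hypot(p[0] - a[0], p[1] - a[1])
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / (dx * dx + dy * dy)
    t = max(0.0, min(1.0, t))  # clamp the projection onto the segment
    return math.hypot(p[0] - (a[0] + t * dx), p[1] - (a[1] + t * dy))


def douglas_peucker(points, epsilon):
    """Simplify an open polyline, keeping every point farther than epsilon from the chord."""
    if len(points) < 3:
        return list(points)
    first, last = points[0], points[-1]
    farthest_index, farthest_distance = 0, 0.0
    for i in range(1, len(points) - 1):
        distance = point_segment_distance(points[i], first, last)
        if distance > farthest_distance:
            farthest_index, farthest_distance = i, distance
    if farthest_distance <= epsilon:
        return [first, last]  # everything in between is close enough to drop
    left = douglas_peucker(points[: farthest_index + 1], epsilon)
    right = douglas_peucker(points[farthest_index:], epsilon)
    return left[:-1] + right  # the split point appears in both halves


curve = [(0, 0), (1, 0.05), (2, 0), (3, 2), (4, 0)]
simplified = douglas_peucker(curve, epsilon=0.1)
```

The point (1, 0.05) lies within epsilon of the chord from (0, 0) to (2, 0), so it is the only vertex removed.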

◆ arcLength()

Calculates a contour perimeter or a curve length.

The function computes a curve length or a closed contour perimeter.

- Parameters

  - curve: Input vector of 2D points, stored in std::vector or Mat.
  - closed: Flag indicating whether the curve is closed or not.
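
The effect of the closed flag can be seen in a short pure-Python sketch that sums segment lengths. The name `arc_length` and the tuple-based points are assumptions for this example.

```python
import math


def arc_length(curve, closed):
    """Sum the lengths of consecutive segments; add the closing segment if closed."""
    total = 0.0
    for (x0, y0), (x1, y1) in zip(curve, curve[1:]):
        total += math.hypot(x1 - x0, y1 - y0)
    if closed and len(curve) > 1:
        (x0, y0), (x1, y1) = curve[-1], curve[0]
        total += math.hypot(x1 - x0, y1 - y0)
    return total


rectangle = [(0, 0), (3, 0), (3, 4), (0, 4)]
open_length = arc_length(rectangle, closed=False)  # three sides: 3 + 4 + 3
perimeter = arc_length(rectangle, closed=True)     # all four sides
```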

◆ arrowedLine() [1/5]

Draws an arrow segment pointing from the first point to the second one.

The function cv::arrowedLine draws an arrow between pt1 and pt2 points in the image. See also line.

- Parameters

  - img: Image.
  - pt1: The point the arrow starts from.
  - pt2: The point the arrow points to.
  - color: Line color.
  - thickness: Line thickness.
  - line_type: Type of the line. See LineTypes.
  - shift: Number of fractional bits in the point coordinates.
  - tipLength: The length of the arrow tip in relation to the arrow length.

◆ arrowedLine() [2/5]

Draws an arrow segment pointing from the first point to the second one.

The function cv::arrowedLine draws an arrow between pt1 and pt2 points in the image. See also line.

- Parameters

  - img: Image.
  - pt1: The point the arrow starts from.
  - pt2: The point the arrow points to.
  - color: Line color.
  - thickness: Line thickness.
  - line_type: Type of the line. See LineTypes.
  - shift: Number of fractional bits in the point coordinates.
  - tipLength: The length of the arrow tip in relation to the arrow length.

◆ arrowedLine() [3/5]

Draws an arrow segment pointing from the first point to the second one.

The function cv::arrowedLine draws an arrow between pt1 and pt2 points in the image. See also line.

- Parameters

  - img: Image.
  - pt1: The point the arrow starts from.
  - pt2: The point the arrow points to.
  - color: Line color.
  - thickness: Line thickness.
  - line_type: Type of the line. See LineTypes.
  - shift: Number of fractional bits in the point coordinates.
  - tipLength: The length of the arrow tip in relation to the arrow length.

◆ arrowedLine() [4/5]

Draws an arrow segment pointing from the first point to the second one.

The function cv::arrowedLine draws an arrow between pt1 and pt2 points in the image. See also line.

- Parameters

  - img: Image.
  - pt1: The point the arrow starts from.
  - pt2: The point the arrow points to.
  - color: Line color.
  - thickness: Line thickness.
  - line_type: Type of the line. See LineTypes.
  - shift: Number of fractional bits in the point coordinates.
  - tipLength: The length of the arrow tip in relation to the arrow length.

◆ arrowedLine() [5/5]

Draws an arrow segment pointing from the first point to the second one.

The function cv::arrowedLine draws an arrow between pt1 and pt2 points in the image. See also line.

- Parameters

  - img: Image.
  - pt1: The point the arrow starts from.
  - pt2: The point the arrow points to.
  - color: Line color.
  - thickness: Line thickness.
  - line_type: Type of the line. See LineTypes.
  - shift: Number of fractional bits in the point coordinates.
  - tipLength: The length of the arrow tip in relation to the arrow length.
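
Because tipLength is relative to the arrow length, the tip grows with the arrow. The sketch below computes where the two tip strokes would end, assuming they are drawn at 45 degrees to the shaft; that angle and the function name `arrow_tip_points` are assumptions for illustration, since the text above only states that the tip is proportional to the arrow length.

```python
import math


def arrow_tip_points(pt1, pt2, tip_length):
    """Return the end points of the two tip strokes that start at pt2."""
    tip_size = math.hypot(pt1[0] - pt2[0], pt1[1] - pt2[1]) * tip_length
    # Direction from the arrow head back toward its start.
    angle = math.atan2(pt1[1] - pt2[1], pt1[0] - pt2[0])
    tips = []
    for offset in (math.pi / 4, -math.pi / 4):  # assumed 45-degree strokes
        tips.append((
            pt2[0] + tip_size * math.cos(angle + offset),
            pt2[1] + tip_size * math.sin(angle + offset),
        ))
    return tips


# A 100-pixel horizontal arrow with a tip that is 10% of its length.
tips = arrow_tip_points((0, 0), (100, 0), tip_length=0.1)
```

Each stroke is 10 pixels long here, so both end points sit about 7.07 pixels behind and beside the arrow head.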

◆ bilateralFilter() [1/2]

Applies the bilateral filter to an image.

The function applies bilateral filtering to the input image (see http://www.dai.ed.ac.uk/CVonline/LOCAL_COPIES/MANDUCHI1/Bilateral_Filtering.html). bilateralFilter can reduce unwanted noise very well while keeping edges fairly sharp, but it is very slow compared to most filters.

Sigma values: For simplicity, you can set the 2 sigma values to be the same. If they are small (< 10), the filter will not have much effect, whereas if they are large (> 150), they will have a very strong effect, making the image look "cartoonish".

Filter size: Large filters (d > 5) are very slow, so it is recommended to use d=5 for real-time applications, and perhaps d=9 for offline applications that need heavy noise filtering.

This filter does not work in place.

- Parameters

  - src: Source 8-bit or floating-point, 1-channel or 3-channel image.
  - dst: Destination image of the same size and type as src.
  - d: Diameter of each pixel neighborhood that is used during filtering. If it is non-positive, it is computed from sigmaSpace.
  - sigmaColor: Filter sigma in the color space. A larger value of the parameter means that farther colors within the pixel neighborhood (see sigmaSpace) will be mixed together, resulting in larger areas of semi-equal color.
  - sigmaSpace: Filter sigma in the coordinate space. A larger value of the parameter means that farther pixels will influence each other as long as their colors are close enough (see sigmaColor). When d>0, it specifies the neighborhood size regardless of sigmaSpace. Otherwise, d is proportional to sigmaSpace.
  - borderType: Border mode used to extrapolate pixels outside of the image, see BorderTypes.

◆ bilateralFilter() [2/2]

Applies the bilateral filter to an image.

The function applies bilateral filtering to the input image, as described in http://www.dai.ed.ac.uk/CVonline/LOCAL_COPIES/MANDUCHI1/Bilateral_Filtering.html. bilateralFilter reduces unwanted noise very well while keeping edges fairly sharp. However, it is very slow compared to most filters.

Sigma values: For simplicity, you can set both sigma values to the same number. If they are small (< 10), the filter will not have much effect, whereas if they are large (> 150), they will have a very strong effect, making the image look "cartoonish".

Filter size: Large filters (d > 5) are very slow, so it is recommended to use d=5 for real-time applications, and perhaps d=9 for offline applications that need heavy noise filtering.

This filter does not work in place.

- Parameters

  - src: Source 8-bit or floating-point, 1-channel or 3-channel image.
  - dst: Destination image of the same size and type as src.
  - d: Diameter of each pixel neighborhood that is used during filtering. If it is non-positive, it is computed from sigmaSpace.
  - sigmaColor: Filter sigma in the color space. A larger value of the parameter means that farther colors within the pixel neighborhood (see sigmaSpace) will be mixed together, resulting in larger areas of semi-equal color.
  - sigmaSpace: Filter sigma in the coordinate space. A larger value of the parameter means that farther pixels will influence each other as long as their colors are close enough (see sigmaColor). When d>0, it specifies the neighborhood size regardless of sigmaSpace. Otherwise, d is proportional to sigmaSpace.
  - borderType: Border mode used to extrapolate pixels outside of the image, see #BorderTypes.

◆ blendLinear()

This is an overloaded member function, provided for convenience. It differs from the above function only in what argument(s) it accepts.

Variant without the mask parameter.

◆ blur() [1/3]

Blurs an image using the normalized box filter.

The function smooths an image using the kernel:

\[\texttt{K} = \frac{1}{\texttt{ksize.width*ksize.height}} \begin{bmatrix} 1 & 1 & 1 & \cdots & 1 & 1 \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \hdotsfor{6} \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \end{bmatrix}\]

The call blur(src, dst, ksize, anchor, borderType) is equivalent to boxFilter(src, dst, src.type(), ksize, anchor, true, borderType).

- Parameters

  - src: Input image; it can have any number of channels, which are processed independently, but the depth should be CV_8U, CV_16U, CV_16S, CV_32F or CV_64F.
  - dst: Output image of the same size and type as src.
  - ksize: Blurring kernel size.
  - anchor: Anchor point; default value Point(-1,-1) means that the anchor is at the kernel center.
  - borderType: Border mode used to extrapolate pixels outside of the image, see #BorderTypes. #BORDER_WRAP is not supported.

- See also

- boxFilter, bilateralFilter, GaussianBlur, medianBlur
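The kernel above is simply an average over a ksize.width × ksize.height window. The sketch below spells that out in plain Python on a 2D list. It is an illustration rather than the library code: the helper names `reflect101` and `blur` are hypothetical, the anchor is fixed at the kernel center, and borders are assumed to be mirrored without repeating the edge pixel (valid only while the kernel radius is smaller than the image).

```python
def reflect101(i, n):
    """Mirror an out-of-range index without repeating the edge pixel.

    Assumption: the index is at most n - 1 positions outside [0, n).
    """
    if i < 0:
        return -i
    if i >= n:
        return 2 * n - 2 - i
    return i

def blur(img, kw, kh):
    """Normalized box filter: each output pixel is the window mean."""
    h, w = len(img), len(img[0])
    ax, ay = kw // 2, kh // 2  # anchor at the kernel centre
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for j in range(kh):
                for i in range(kw):
                    yy = reflect101(y + j - ay, h)
                    xx = reflect101(x + i - ax, w)
                    total += img[yy][xx]
            out[y][x] = total / (kw * kh)  # the 1/(width*height) factor
    return out

image = [[1, 2, 3],
         [4, 5, 6],
         [7, 8, 9]]
blurred = blur(image, 3, 3)
```

For the center pixel the window covers the whole image, so the result is the mean of all nine values; corner results depend on the border assumption stated above.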

◆ blur() [2/3]

Blurs an image using the normalized box filter.

The function smooths an image using the kernel:

\[\texttt{K} = \frac{1}{\texttt{ksize.width*ksize.height}} \begin{bmatrix} 1 & 1 & 1 & \cdots & 1 & 1 \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \hdotsfor{6} \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \end{bmatrix}\]

The call blur(src, dst, ksize, anchor, borderType) is equivalent to boxFilter(src, dst, src.type(), ksize, anchor, true, borderType).

- Parameters

  - src: Input image; it can have any number of channels, which are processed independently, but the depth should be CV_8U, CV_16U, CV_16S, CV_32F or CV_64F.
  - dst: Output image of the same size and type as src.
  - ksize: Blurring kernel size.
  - anchor: Anchor point; default value Point(-1,-1) means that the anchor is at the kernel center.
  - borderType: Border mode used to extrapolate pixels outside of the image, see #BorderTypes. #BORDER_WRAP is not supported.

- See also

- boxFilter, bilateralFilter, GaussianBlur, medianBlur

◆ blur() [3/3]

Blurs an image using the normalized box filter.

The function smooths an image using the kernel:

\[\texttt{K} = \frac{1}{\texttt{ksize.width*ksize.height}} \begin{bmatrix} 1 & 1 & 1 & \cdots & 1 & 1 \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \hdotsfor{6} \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \end{bmatrix}\]

The call blur(src, dst, ksize, anchor, borderType) is equivalent to boxFilter(src, dst, src.type(), ksize, anchor, true, borderType).

- Parameters

  - src: Input image; it can have any number of channels, which are processed independently, but the depth should be CV_8U, CV_16U, CV_16S, CV_32F or CV_64F.
  - dst: Output image of the same size and type as src.
  - ksize: Blurring kernel size.
  - anchor: Anchor point; default value Point(-1,-1) means that the anchor is at the kernel center.
  - borderType: Border mode used to extrapolate pixels outside of the image, see #BorderTypes. #BORDER_WRAP is not supported.

- See also

- boxFilter, bilateralFilter, GaussianBlur, medianBlur

◆ boundingRect()

Calculates the upright bounding rectangle of a point set or of the non-zero pixels of a grayscale image.

The function calculates and returns the minimal upright (axis-aligned) bounding rectangle for the specified point set or for the non-zero pixels of a grayscale image.

- Parameters

  - array: Input gray-scale image or 2D point set, stored in std::vector or Mat.
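As a rough illustration of what a minimal upright bounding rectangle means, the sketch below computes one in plain Python for both input kinds. The function names are hypothetical, and the inclusive pixel convention (width = max - min + 1) is an assumption of this sketch for integer coordinates, not something stated in the text above.

```python
def bounding_rect(points):
    """Return (x, y, width, height) of the smallest axis-aligned
    rectangle containing all integer points.

    Assumption: inclusive pixel convention, so a single point yields
    a 1x1 rectangle. An empty input yields (0, 0, 0, 0).
    """
    if not points:
        return (0, 0, 0, 0)
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    x, y = min(xs), min(ys)
    return (x, y, max(xs) - x + 1, max(ys) - y + 1)

def bounding_rect_of_image(img):
    """Same rectangle, taken over the non-zero pixels of a 2D list."""
    points = [(x, y)
              for y, row in enumerate(img)
              for x, value in enumerate(row)
              if value != 0]
    return bounding_rect(points)

rect_points = bounding_rect([(2, 3), (5, 1), (4, 6)])
rect_image = bounding_rect_of_image([[0, 0, 0, 0],
                                     [0, 9, 0, 0],
                                     [0, 0, 3, 0]])
```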

◆ boxFilter() [1/4]

Blurs an image using the box filter.

The function smooths an image using the kernel:

\[\texttt{K} = \alpha \begin{bmatrix} 1 & 1 & 1 & \cdots & 1 & 1 \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \hdotsfor{6} \\ 1 & 1 & 1 & \cdots & 1 & 1 \end{bmatrix}\]

where

\[\alpha = \begin{cases} \frac{1}{\texttt{ksize.width*ksize.height}} & \texttt{when } \texttt{normalize=true} \\1 & \texttt{otherwise}\end{cases}\]

The unnormalized box filter is useful for computing various integral characteristics over each pixel neighborhood, such as covariance matrices of image derivatives (used in dense optical flow algorithms, and so on). If you need to compute pixel sums over variable-size windows, use integral.

- Parameters

  - src: Input image.
  - dst: Output image of the same size and type as src.
  - ddepth: The output image depth (-1 to use src.depth()).
  - ksize: Blurring kernel size.
  - anchor: Anchor point; default value Point(-1,-1) means that the anchor is at the kernel center.
  - normalize: Flag, specifying whether the kernel is normalized by its area or not.
  - borderType: Border mode used to extrapolate pixels outside of the image, see #BorderTypes. #BORDER_WRAP is not supported.

- See also

- blur, bilateralFilter, GaussianBlur, medianBlur, integral
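The effect of the normalize flag on the factor α can be seen in a small plain-Python sketch. This is an illustration under simplifying assumptions, not the library routine: the hypothetical `box_filter` below only evaluates windows that lie fully inside the image, so its output is smaller than the input, whereas the documented function extrapolates borders and keeps the input size.

```python
def box_filter(img, ksize, normalize=True):
    """Box filter over fully contained windows only.

    Assumption of this sketch: no border extrapolation, so the result
    has (h - kh + 1) rows and (w - kw + 1) columns.
    """
    kw, kh = ksize
    h, w = len(img), len(img[0])
    alpha = 1.0 / (kw * kh) if normalize else 1.0
    return [[alpha * sum(img[y + j][x + i]
                         for j in range(kh) for i in range(kw))
             for x in range(w - kw + 1)]
            for y in range(h - kh + 1)]

image = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12]]

sums = box_filter(image, (2, 2), normalize=False)  # raw window sums
means = box_filter(image, (2, 2), normalize=True)  # window averages
```

With normalize=False each output value is the plain sum over the window, which is the building block for neighborhood statistics; with normalize=True the same sum is divided by the kernel area.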

◆ boxFilter() [2/4]

Blurs an image using the box filter.

The function smooths an image using the kernel:

\[\texttt{K} = \alpha \begin{bmatrix} 1 & 1 & 1 & \cdots & 1 & 1 \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \hdotsfor{6} \\ 1 & 1 & 1 & \cdots & 1 & 1 \end{bmatrix}\]

where

\[\alpha = \begin{cases} \frac{1}{\texttt{ksize.width*ksize.height}} & \texttt{when } \texttt{normalize=true} \\1 & \texttt{otherwise}\end{cases}\]

The unnormalized box filter is useful for computing various integral characteristics over each pixel neighborhood, such as covariance matrices of image derivatives (used in dense optical flow algorithms, and so on). If you need to compute pixel sums over variable-size windows, use integral.

- Parameters

  - src: Input image.
  - dst: Output image of the same size and type as src.
  - ddepth: The output image depth (-1 to use src.depth()).
  - ksize: Blurring kernel size.
  - anchor: Anchor point; default value Point(-1,-1) means that the anchor is at the kernel center.
  - normalize: Flag, specifying whether the kernel is normalized by its area or not.
  - borderType: Border mode used to extrapolate pixels outside of the image, see #BorderTypes. #BORDER_WRAP is not supported.

- See also

- blur, bilateralFilter, GaussianBlur, medianBlur, integral
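The remark about variable-size windows refers to the integral image (summed-area table) technique. The sketch below shows the idea in plain Python: after one pass over the image, the sum over any rectangle costs four lookups regardless of its size. The helper names and the half-open window convention are choices made for this example only.

```python
def integral(img):
    """Summed-area table with an extra leading zero row and column,
    so sat[y][x] is the sum of img[0:y][0:x]."""
    h, w = len(img), len(img[0])
    sat = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        for x in range(w):
            sat[y + 1][x + 1] = (img[y][x] + sat[y][x + 1]
                                 + sat[y + 1][x] - sat[y][x])
    return sat

def window_sum(sat, x0, y0, x1, y1):
    """Sum of pixels with x0 <= x < x1 and y0 <= y < y1."""
    return sat[y1][x1] - sat[y0][x1] - sat[y1][x0] + sat[y0][x0]

image = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12]]
sat = integral(image)

small = window_sum(sat, 0, 0, 2, 2)   # 2x2 window at the top-left
large = window_sum(sat, 1, 0, 4, 3)   # 3x3 window covering columns 1..3
whole = window_sum(sat, 0, 0, 4, 3)   # the entire image
```

A fixed-size unnormalized box filter gives the same sums for one window size; the table is preferable when the window size changes from pixel to pixel.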

◆ boxFilter() [3/4]

Blurs an image using the box filter.

The function smooths an image using the kernel:

\[\texttt{K} = \alpha \begin{bmatrix} 1 & 1 & 1 & \cdots & 1 & 1 \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \hdotsfor{6} \\ 1 & 1 & 1 & \cdots & 1 & 1 \end{bmatrix}\]

where

\[\alpha = \begin{cases} \frac{1}{\texttt{ksize.width*ksize.height}} & \texttt{when } \texttt{normalize=true} \\1 & \texttt{otherwise}\end{cases}\]

The unnormalized box filter is useful for computing various integral characteristics over each pixel neighborhood, such as covariance matrices of image derivatives (used in dense optical flow algorithms, and so on). If you need to compute pixel sums over variable-size windows, use integral.

- Parameters

  - src: Input image.
  - dst: Output image of the same size and type as src.
  - ddepth: The output image depth (-1 to use src.depth()).
  - ksize: Blurring kernel size.
  - anchor: Anchor point; default value Point(-1,-1) means that the anchor is at the kernel center.
  - normalize: Flag, specifying whether the kernel is normalized by its area or not.
  - borderType: Border mode used to extrapolate pixels outside of the image, see #BorderTypes. #BORDER_WRAP is not supported.

- See also

- blur, bilateralFilter, GaussianBlur, medianBlur, integral

◆ boxFilter() [4/4]

Blurs an image using the box filter.

The function smooths an image using the kernel:

\[\texttt{K} = \alpha \begin{bmatrix} 1 & 1 & 1 & \cdots & 1 & 1 \\ 1 & 1 & 1 & \cdots & 1 & 1 \\ \hdotsfor{6} \\ 1 & 1 & 1 & \cdots & 1 & 1 \end{bmatrix}\]

where

\[\alpha = \begin{cases} \frac{1}{\texttt{ksize.width*ksize.height}} & \texttt{when } \texttt{normalize=true} \\1 & \texttt{otherwise}\end{cases}\]

The unnormalized box filter is useful for computing various integral characteristics over each pixel neighborhood, such as covariance matrices of image derivatives (used in dense optical flow algorithms, and so on). If you need to compute pixel sums over variable-size windows, use integral.

- Parameters

  - src: Input image.
  - dst: Output image of the same size and type as src.
  - ddepth: The output image depth (-1 to use src.depth()).
  - ksize: Blurring kernel size.
  - anchor: Anchor point; default value Point(-1,-1) means that the anchor is at the kernel center.
  - normalize: Flag, specifying whether the kernel is normalized by its area or not.
  - borderType: Border mode used to extrapolate pixels outside of the image, see #BorderTypes. #BORDER_WRAP is not supported.

- See also

- blur, bilateralFilter, GaussianBlur, medianBlur, integral

◆ boxPoints()

Finds the four vertices of a rotated rect. Useful to draw the rotated rectangle.

The function finds the four vertices of a rotated rectangle. This function is useful to draw the rectangle. In C++, instead of using this function, you can directly use RotatedRect::points method. Please visit the tutorial on Creating Bounding rotated boxes and ellipses for contours for more information.

- Parameters

  - box: The input rotated rectangle. It may be the output of minAreaRect.
  - points: The output array of the four vertices of the rectangle.
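The geometry behind finding the four vertices can be sketched in plain Python: take the four half-extent corners of an axis-aligned box and rotate them about the center. The function name `box_points`, the (center, size, angle in degrees) inputs, the vertex order and the rotation sign convention are all assumptions of this sketch and may differ from what the library returns.

```python
import math

def box_points(center, size, angle_deg):
    """Return the four corners of a rectangle rotated about its centre.

    Assumptions: angle in degrees, rotation applied with the standard
    2D rotation matrix, corners listed starting from (-w/2, -h/2).
    """
    cx, cy = center
    w, h = size
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    corners = [(-w / 2, -h / 2), (w / 2, -h / 2),
               (w / 2, h / 2), (-w / 2, h / 2)]
    return [(cx + dx * c - dy * s, cy + dx * s + dy * c)
            for dx, dy in corners]

flat = box_points((10.0, 20.0), (4.0, 2.0), 0.0)
turned = box_points((10.0, 20.0), (4.0, 2.0), 90.0)
```

Drawing the rectangle then amounts to connecting consecutive vertices and closing the loop from the last vertex back to the first.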

◆ calcBackProject()

This is an overloaded member function, provided for convenience. It differs from the above function only in what argument(s) it accepts.

◆ calcHist() [1/2]

This is an overloaded member function, provided for convenience. It differs from the above function only in what argument(s) it accepts.

This variant supports only uniform histograms.