| Static Public Member Functions | |

| static double | calibrateCamera (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs) |

| static double | calibrateCamera (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, int flags) |

| static double | calibrateCamera (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, int flags, in Vec3d criteria) |

| static double | calibrateCamera (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs) |

| static double | calibrateCamera (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, int flags) |

| static double | calibrateCamera (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, int flags, in(double type, double maxCount, double epsilon) criteria) |

| static double | calibrateCamera (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs) |

| static double | calibrateCamera (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, int flags) |

| static double | calibrateCamera (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, int flags, TermCriteria criteria) |

| static double | calibrateCameraExtended (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat perViewErrors) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraExtended (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat perViewErrors, int flags) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraExtended (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat perViewErrors, int flags, in Vec3d criteria) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraExtended (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat perViewErrors) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraExtended (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat perViewErrors, int flags) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraExtended (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat perViewErrors, int flags, in(double type, double maxCount, double epsilon) criteria) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraExtended (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat perViewErrors) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraExtended (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat perViewErrors, int flags) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraExtended (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat perViewErrors, int flags, TermCriteria criteria) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraRO (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints) |

| static double | calibrateCameraRO (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, int flags) |

| static double | calibrateCameraRO (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, int flags, in Vec3d criteria) |

| static double | calibrateCameraRO (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints) |

| static double | calibrateCameraRO (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, int flags) |

| static double | calibrateCameraRO (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, int flags, in(double type, double maxCount, double epsilon) criteria) |

| static double | calibrateCameraRO (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints) |

| static double | calibrateCameraRO (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, int flags) |

| static double | calibrateCameraRO (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, int flags, TermCriteria criteria) |

| static double | calibrateCameraROExtended (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat stdDeviationsObjPoints, Mat perViewErrors) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraROExtended (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat stdDeviationsObjPoints, Mat perViewErrors, int flags) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraROExtended (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat stdDeviationsObjPoints, Mat perViewErrors, int flags, in Vec3d criteria) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraROExtended (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat stdDeviationsObjPoints, Mat perViewErrors) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraROExtended (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat stdDeviationsObjPoints, Mat perViewErrors, int flags) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraROExtended (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat stdDeviationsObjPoints, Mat perViewErrors, int flags, in(double type, double maxCount, double epsilon) criteria) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraROExtended (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat stdDeviationsObjPoints, Mat perViewErrors) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraROExtended (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat stdDeviationsObjPoints, Mat perViewErrors, int flags) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static double | calibrateCameraROExtended (List< Mat > objectPoints, List< Mat > imagePoints, Size imageSize, int iFixedPoint, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, Mat newObjPoints, Mat stdDeviationsIntrinsics, Mat stdDeviationsExtrinsics, Mat stdDeviationsObjPoints, Mat perViewErrors, int flags, TermCriteria criteria) |

| Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern. | |

| static void | calibrateHandEye (List< Mat > R_gripper2base, List< Mat > t_gripper2base, List< Mat > R_target2cam, List< Mat > t_target2cam, Mat R_cam2gripper, Mat t_cam2gripper) |

| Computes Hand-Eye calibration: \(_{}^{g}\textrm{T}_c\). | |

| static void | calibrateHandEye (List< Mat > R_gripper2base, List< Mat > t_gripper2base, List< Mat > R_target2cam, List< Mat > t_target2cam, Mat R_cam2gripper, Mat t_cam2gripper, int method) |

| Computes Hand-Eye calibration: \(_{}^{g}\textrm{T}_c\). | |

| static void | calibrateRobotWorldHandEye (List< Mat > R_world2cam, List< Mat > t_world2cam, List< Mat > R_base2gripper, List< Mat > t_base2gripper, Mat R_base2world, Mat t_base2world, Mat R_gripper2cam, Mat t_gripper2cam) |

| Computes Robot-World/Hand-Eye calibration: \(_{}^{w}\textrm{T}_b\) and \(_{}^{c}\textrm{T}_g\). | |

| static void | calibrateRobotWorldHandEye (List< Mat > R_world2cam, List< Mat > t_world2cam, List< Mat > R_base2gripper, List< Mat > t_base2gripper, Mat R_base2world, Mat t_base2world, Mat R_gripper2cam, Mat t_gripper2cam, int method) |

| Computes Robot-World/Hand-Eye calibration: \(_{}^{w}\textrm{T}_b\) and \(_{}^{c}\textrm{T}_g\). | |

| static void | calibrationMatrixValues (Mat cameraMatrix, in Vec2d imageSize, double apertureWidth, double apertureHeight, double[] fovx, double[] fovy, double[] focalLength, out Vec2d principalPoint, double[] aspectRatio) |

| Computes useful camera characteristics from the camera intrinsic matrix. | |

| static void | calibrationMatrixValues (Mat cameraMatrix, in(double width, double height) imageSize, double apertureWidth, double apertureHeight, double[] fovx, double[] fovy, double[] focalLength, out(double x, double y) principalPoint, double[] aspectRatio) |

| Computes useful camera characteristics from the camera intrinsic matrix. | |

| static void | calibrationMatrixValues (Mat cameraMatrix, Size imageSize, double apertureWidth, double apertureHeight, double[] fovx, double[] fovy, double[] focalLength, Point principalPoint, double[] aspectRatio) |

| Computes useful camera characteristics from the camera intrinsic matrix. | |

| static bool | checkChessboard (Mat img, in Vec2d size) |

| static bool | checkChessboard (Mat img, in(double width, double height) size) |

| static bool | checkChessboard (Mat img, Size size) |

| static void | composeRT (Mat rvec1, Mat tvec1, Mat rvec2, Mat tvec2, Mat rvec3, Mat tvec3) |

| Combines two rotation-and-shift transformations. | |

| static void | composeRT (Mat rvec1, Mat tvec1, Mat rvec2, Mat tvec2, Mat rvec3, Mat tvec3, Mat dr3dr1) |

| Combines two rotation-and-shift transformations. | |

| static void | composeRT (Mat rvec1, Mat tvec1, Mat rvec2, Mat tvec2, Mat rvec3, Mat tvec3, Mat dr3dr1, Mat dr3dt1) |

| Combines two rotation-and-shift transformations. | |

| static void | composeRT (Mat rvec1, Mat tvec1, Mat rvec2, Mat tvec2, Mat rvec3, Mat tvec3, Mat dr3dr1, Mat dr3dt1, Mat dr3dr2) |

| Combines two rotation-and-shift transformations. | |

| static void | composeRT (Mat rvec1, Mat tvec1, Mat rvec2, Mat tvec2, Mat rvec3, Mat tvec3, Mat dr3dr1, Mat dr3dt1, Mat dr3dr2, Mat dr3dt2) |

| Combines two rotation-and-shift transformations. | |

| static void | composeRT (Mat rvec1, Mat tvec1, Mat rvec2, Mat tvec2, Mat rvec3, Mat tvec3, Mat dr3dr1, Mat dr3dt1, Mat dr3dr2, Mat dr3dt2, Mat dt3dr1) |

| Combines two rotation-and-shift transformations. | |

| static void | composeRT (Mat rvec1, Mat tvec1, Mat rvec2, Mat tvec2, Mat rvec3, Mat tvec3, Mat dr3dr1, Mat dr3dt1, Mat dr3dr2, Mat dr3dt2, Mat dt3dr1, Mat dt3dt1) |

| Combines two rotation-and-shift transformations. | |

| static void | composeRT (Mat rvec1, Mat tvec1, Mat rvec2, Mat tvec2, Mat rvec3, Mat tvec3, Mat dr3dr1, Mat dr3dt1, Mat dr3dr2, Mat dr3dt2, Mat dt3dr1, Mat dt3dt1, Mat dt3dr2) |

| Combines two rotation-and-shift transformations. | |

| static void | composeRT (Mat rvec1, Mat tvec1, Mat rvec2, Mat tvec2, Mat rvec3, Mat tvec3, Mat dr3dr1, Mat dr3dt1, Mat dr3dr2, Mat dr3dt2, Mat dt3dr1, Mat dt3dt1, Mat dt3dr2, Mat dt3dt2) |

| Combines two rotation-and-shift transformations. | |

| static void | computeCorrespondEpilines (Mat points, int whichImage, Mat F, Mat lines) |

| For points in an image of a stereo pair, computes the corresponding epilines in the other image. | |

| static void | convertPointsFromHomogeneous (Mat src, Mat dst) |

| Converts points from homogeneous to Euclidean space. | |

| static void | convertPointsToHomogeneous (Mat src, Mat dst) |

| Converts points from Euclidean to homogeneous space. | |

| static void | correctMatches (Mat F, Mat points1, Mat points2, Mat newPoints1, Mat newPoints2) |

| Refines coordinates of corresponding points. | |

| static void | decomposeEssentialMat (Mat E, Mat R1, Mat R2, Mat t) |

| Decomposes an essential matrix into possible rotations and a translation. | |

| static int | decomposeHomographyMat (Mat H, Mat K, List< Mat > rotations, List< Mat > translations, List< Mat > normals) |

| Decomposes a homography matrix into rotation(s), translation(s) and plane normal(s). | |

| static void | decomposeProjectionMatrix (Mat projMatrix, Mat cameraMatrix, Mat rotMatrix, Mat transVect) |

| Decomposes a projection matrix into a rotation matrix and a camera intrinsic matrix. | |

| static void | decomposeProjectionMatrix (Mat projMatrix, Mat cameraMatrix, Mat rotMatrix, Mat transVect, Mat rotMatrixX) |

| Decomposes a projection matrix into a rotation matrix and a camera intrinsic matrix. | |

| static void | decomposeProjectionMatrix (Mat projMatrix, Mat cameraMatrix, Mat rotMatrix, Mat transVect, Mat rotMatrixX, Mat rotMatrixY) |

| Decomposes a projection matrix into a rotation matrix and a camera intrinsic matrix. | |

| static void | decomposeProjectionMatrix (Mat projMatrix, Mat cameraMatrix, Mat rotMatrix, Mat transVect, Mat rotMatrixX, Mat rotMatrixY, Mat rotMatrixZ) |

| Decomposes a projection matrix into a rotation matrix and a camera intrinsic matrix. | |

| static void | decomposeProjectionMatrix (Mat projMatrix, Mat cameraMatrix, Mat rotMatrix, Mat transVect, Mat rotMatrixX, Mat rotMatrixY, Mat rotMatrixZ, Mat eulerAngles) |

| Decomposes a projection matrix into a rotation matrix and a camera intrinsic matrix. | |

| static void | drawChessboardCorners (Mat image, in Vec2d patternSize, MatOfPoint2f corners, bool patternWasFound) |

| Renders the detected chessboard corners. | |

| static void | drawChessboardCorners (Mat image, in(double width, double height) patternSize, MatOfPoint2f corners, bool patternWasFound) |

| Renders the detected chessboard corners. | |

| static void | drawChessboardCorners (Mat image, Size patternSize, MatOfPoint2f corners, bool patternWasFound) |

| Renders the detected chessboard corners. | |

| static void | drawFrameAxes (Mat image, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, float length) |

| Draws the axes of the world/object coordinate system from pose estimation. | |

| static void | drawFrameAxes (Mat image, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, float length, int thickness) |

| Draws the axes of the world/object coordinate system from pose estimation. | |

| static Mat | estimateAffine2D (Mat from, Mat to) |

| Computes an optimal affine transformation between two 2D point sets. | |

| static Mat | estimateAffine2D (Mat from, Mat to, Mat inliers) |

| Computes an optimal affine transformation between two 2D point sets. | |

| static Mat | estimateAffine2D (Mat from, Mat to, Mat inliers, int method) |

| Computes an optimal affine transformation between two 2D point sets. | |

| static Mat | estimateAffine2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold) |

| Computes an optimal affine transformation between two 2D point sets. | |

| static Mat | estimateAffine2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold, long maxIters) |

| Computes an optimal affine transformation between two 2D point sets. | |

| static Mat | estimateAffine2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold, long maxIters, double confidence) |

| Computes an optimal affine transformation between two 2D point sets. | |

| static Mat | estimateAffine2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold, long maxIters, double confidence, long refineIters) |

| Computes an optimal affine transformation between two 2D point sets. | |

| static Mat | estimateAffine2D (Mat pts1, Mat pts2, Mat inliers, UsacParams _params) |

| static Mat | estimateAffine3D (Mat src, Mat dst) |

| Computes an optimal affine transformation between two 3D point sets. | |

| static Mat | estimateAffine3D (Mat src, Mat dst, double[] scale) |

| Computes an optimal affine transformation between two 3D point sets. | |

| static Mat | estimateAffine3D (Mat src, Mat dst, double[] scale, bool force_rotation) |

| Computes an optimal affine transformation between two 3D point sets. | |

| static int | estimateAffine3D (Mat src, Mat dst, Mat _out, Mat inliers) |

| Computes an optimal affine transformation between two 3D point sets. | |

| static int | estimateAffine3D (Mat src, Mat dst, Mat _out, Mat inliers, double ransacThreshold) |

| Computes an optimal affine transformation between two 3D point sets. | |

| static int | estimateAffine3D (Mat src, Mat dst, Mat _out, Mat inliers, double ransacThreshold, double confidence) |

| Computes an optimal affine transformation between two 3D point sets. | |

| static Mat | estimateAffinePartial2D (Mat from, Mat to) |

| Computes an optimal limited affine transformation with 4 degrees of freedom between two 2D point sets. | |

| static Mat | estimateAffinePartial2D (Mat from, Mat to, Mat inliers) |

| Computes an optimal limited affine transformation with 4 degrees of freedom between two 2D point sets. | |

| static Mat | estimateAffinePartial2D (Mat from, Mat to, Mat inliers, int method) |

| Computes an optimal limited affine transformation with 4 degrees of freedom between two 2D point sets. | |

| static Mat | estimateAffinePartial2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold) |

| Computes an optimal limited affine transformation with 4 degrees of freedom between two 2D point sets. | |

| static Mat | estimateAffinePartial2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold, long maxIters) |

| Computes an optimal limited affine transformation with 4 degrees of freedom between two 2D point sets. | |

| static Mat | estimateAffinePartial2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold, long maxIters, double confidence) |

| Computes an optimal limited affine transformation with 4 degrees of freedom between two 2D point sets. | |

| static Mat | estimateAffinePartial2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold, long maxIters, double confidence, long refineIters) |

| Computes an optimal limited affine transformation with 4 degrees of freedom between two 2D point sets. | |

| static Scalar | estimateChessboardSharpness (Mat image, Size patternSize, Mat corners) |

| Estimates the sharpness of a detected chessboard. | |

| static Scalar | estimateChessboardSharpness (Mat image, Size patternSize, Mat corners, float rise_distance) |

| Estimates the sharpness of a detected chessboard. | |

| static Scalar | estimateChessboardSharpness (Mat image, Size patternSize, Mat corners, float rise_distance, bool vertical) |

| Estimates the sharpness of a detected chessboard. | |

| static Scalar | estimateChessboardSharpness (Mat image, Size patternSize, Mat corners, float rise_distance, bool vertical, Mat sharpness) |

| Estimates the sharpness of a detected chessboard. | |

| static (double v0, double v1, double v2, double v3) | estimateChessboardSharpnessAsValueTuple (Mat image, in(double width, double height) patternSize, Mat corners) |

| static (double v0, double v1, double v2, double v3) | estimateChessboardSharpnessAsValueTuple (Mat image, in(double width, double height) patternSize, Mat corners, float rise_distance) |

| static (double v0, double v1, double v2, double v3) | estimateChessboardSharpnessAsValueTuple (Mat image, in(double width, double height) patternSize, Mat corners, float rise_distance, bool vertical) |

| static (double v0, double v1, double v2, double v3) | estimateChessboardSharpnessAsValueTuple (Mat image, in(double width, double height) patternSize, Mat corners, float rise_distance, bool vertical, Mat sharpness) |

| static Vec4d | estimateChessboardSharpnessAsVec4d (Mat image, in Vec2d patternSize, Mat corners) |

| Estimates the sharpness of a detected chessboard. | |

| static Vec4d | estimateChessboardSharpnessAsVec4d (Mat image, in Vec2d patternSize, Mat corners, float rise_distance) |

| Estimates the sharpness of a detected chessboard. | |

| static Vec4d | estimateChessboardSharpnessAsVec4d (Mat image, in Vec2d patternSize, Mat corners, float rise_distance, bool vertical) |

| Estimates the sharpness of a detected chessboard. | |

| static Vec4d | estimateChessboardSharpnessAsVec4d (Mat image, in Vec2d patternSize, Mat corners, float rise_distance, bool vertical, Mat sharpness) |

| Estimates the sharpness of a detected chessboard. | |

| static double[] | estimateTranslation2D (Mat from, Mat to) |

| Computes a pure 2D translation between two 2D point sets. | |

| static double[] | estimateTranslation2D (Mat from, Mat to, Mat inliers) |

| Computes a pure 2D translation between two 2D point sets. | |

| static double[] | estimateTranslation2D (Mat from, Mat to, Mat inliers, int method) |

| Computes a pure 2D translation between two 2D point sets. | |

| static double[] | estimateTranslation2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold) |

| Computes a pure 2D translation between two 2D point sets. | |

| static double[] | estimateTranslation2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold, long maxIters) |

| Computes a pure 2D translation between two 2D point sets. | |

| static double[] | estimateTranslation2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold, long maxIters, double confidence) |

| Computes a pure 2D translation between two 2D point sets. | |

| static double[] | estimateTranslation2D (Mat from, Mat to, Mat inliers, int method, double ransacReprojThreshold, long maxIters, double confidence, long refineIters) |

| Computes a pure 2D translation between two 2D point sets. | |

| static int | estimateTranslation3D (Mat src, Mat dst, Mat _out, Mat inliers) |

| Computes an optimal translation between two 3D point sets. | |

| static int | estimateTranslation3D (Mat src, Mat dst, Mat _out, Mat inliers, double ransacThreshold) |

| Computes an optimal translation between two 3D point sets. | |

| static int | estimateTranslation3D (Mat src, Mat dst, Mat _out, Mat inliers, double ransacThreshold, double confidence) |

| Computes an optimal translation between two 3D point sets. | |

| static void | filterHomographyDecompByVisibleRefpoints (List< Mat > rotations, List< Mat > normals, Mat beforePoints, Mat afterPoints, Mat possibleSolutions) |

| Filters homography decompositions based on additional information. | |

| static void | filterHomographyDecompByVisibleRefpoints (List< Mat > rotations, List< Mat > normals, Mat beforePoints, Mat afterPoints, Mat possibleSolutions, Mat pointsMask) |

| Filters homography decompositions based on additional information. | |

| static void | filterSpeckles (Mat img, double newVal, int maxSpeckleSize, double maxDiff) |

| Filters out small noise blobs (speckles) in the disparity map. | |

| static void | filterSpeckles (Mat img, double newVal, int maxSpeckleSize, double maxDiff, Mat buf) |

| Filters out small noise blobs (speckles) in the disparity map. | |

| static bool | find4QuadCornerSubpix (Mat img, Mat corners, in Vec2d region_size) |

| static bool | find4QuadCornerSubpix (Mat img, Mat corners, in(double width, double height) region_size) |

| static bool | find4QuadCornerSubpix (Mat img, Mat corners, Size region_size) |

| static bool | findChessboardCorners (Mat image, in Vec2d patternSize, MatOfPoint2f corners) |

| Finds the positions of internal corners of the chessboard. | |

| static bool | findChessboardCorners (Mat image, in Vec2d patternSize, MatOfPoint2f corners, int flags) |

| Finds the positions of internal corners of the chessboard. | |

| static bool | findChessboardCorners (Mat image, in(double width, double height) patternSize, MatOfPoint2f corners) |

| Finds the positions of internal corners of the chessboard. | |

| static bool | findChessboardCorners (Mat image, in(double width, double height) patternSize, MatOfPoint2f corners, int flags) |

| Finds the positions of internal corners of the chessboard. | |

| static bool | findChessboardCorners (Mat image, Size patternSize, MatOfPoint2f corners) |

| Finds the positions of internal corners of the chessboard. | |

| static bool | findChessboardCorners (Mat image, Size patternSize, MatOfPoint2f corners, int flags) |

| Finds the positions of internal corners of the chessboard. | |

| static bool | findChessboardCornersSB (Mat image, in Vec2d patternSize, Mat corners) |

| static bool | findChessboardCornersSB (Mat image, in Vec2d patternSize, Mat corners, int flags) |

| static bool | findChessboardCornersSB (Mat image, in(double width, double height) patternSize, Mat corners) |

| static bool | findChessboardCornersSB (Mat image, in(double width, double height) patternSize, Mat corners, int flags) |

| static bool | findChessboardCornersSB (Mat image, Size patternSize, Mat corners) |

| static bool | findChessboardCornersSB (Mat image, Size patternSize, Mat corners, int flags) |

| static bool | findChessboardCornersSBWithMeta (Mat image, in Vec2d patternSize, Mat corners, int flags, Mat meta) |

| Finds the positions of internal corners of the chessboard using a sector-based approach. | |

| static bool | findChessboardCornersSBWithMeta (Mat image, in(double width, double height) patternSize, Mat corners, int flags, Mat meta) |

| Finds the positions of internal corners of the chessboard using a sector-based approach. | |

| static bool | findChessboardCornersSBWithMeta (Mat image, Size patternSize, Mat corners, int flags, Mat meta) |

| Finds the positions of internal corners of the chessboard using a sector-based approach. | |

| static bool | findCirclesGrid (Mat image, in Vec2d patternSize, Mat centers) |

| static bool | findCirclesGrid (Mat image, in Vec2d patternSize, Mat centers, int flags) |

| static bool | findCirclesGrid (Mat image, in(double width, double height) patternSize, Mat centers) |

| static bool | findCirclesGrid (Mat image, in(double width, double height) patternSize, Mat centers, int flags) |

| static bool | findCirclesGrid (Mat image, Size patternSize, Mat centers) |

| static bool | findCirclesGrid (Mat image, Size patternSize, Mat centers, int flags) |

| static Mat | findEssentialMat (Mat points1, Mat points2) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in Vec2d pp) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in Vec2d pp, int method) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in Vec2d pp, int method, double prob) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in Vec2d pp, int method, double prob, double threshold) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in Vec2d pp, int method, double prob, double threshold, int maxIters) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in Vec2d pp, int method, double prob, double threshold, int maxIters, Mat mask) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in(double x, double y) pp) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in(double x, double y) pp, int method) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in(double x, double y) pp, int method, double prob) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in(double x, double y) pp, int method, double prob, double threshold) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in(double x, double y) pp, int method, double prob, double threshold, int maxIters) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, in(double x, double y) pp, int method, double prob, double threshold, int maxIters, Mat mask) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, Point pp) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, Point pp, int method) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, Point pp, int method, double prob) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, Point pp, int method, double prob, double threshold) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, Point pp, int method, double prob, double threshold, int maxIters) |

| static Mat | findEssentialMat (Mat points1, Mat points2, double focal, Point pp, int method, double prob, double threshold, int maxIters, Mat mask) |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix) |

| Calculates an essential matrix from the corresponding points in two images. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix, int method) |

| Calculates an essential matrix from the corresponding points in two images. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix, int method, double prob) |

| Calculates an essential matrix from the corresponding points in two images. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix, int method, double prob, double threshold) |

| Calculates an essential matrix from the corresponding points in two images. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix, int method, double prob, double threshold, int maxIters) |

| Calculates an essential matrix from the corresponding points in two images. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix, int method, double prob, double threshold, int maxIters, Mat mask) |

| Calculates an essential matrix from the corresponding points in two images. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix1, Mat cameraMatrix2, Mat dist_coeff1, Mat dist_coeff2, Mat mask, UsacParams _params) |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2) |

| Calculates an essential matrix from the corresponding points in two images from potentially two different cameras. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, int method) |

| Calculates an essential matrix from the corresponding points in two images from potentially two different cameras. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, int method, double prob) |

| Calculates an essential matrix from the corresponding points in two images from potentially two different cameras. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, int method, double prob, double threshold) |

| Calculates an essential matrix from the corresponding points in two images from potentially two different cameras. | |

| static Mat | findEssentialMat (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, int method, double prob, double threshold, Mat mask) |

| Calculates an essential matrix from the corresponding points in two images from potentially two different cameras. | |

| static Mat | findFundamentalMat (MatOfPoint2f points1, MatOfPoint2f points2) |

| static Mat | findFundamentalMat (MatOfPoint2f points1, MatOfPoint2f points2, int method) |

| static Mat | findFundamentalMat (MatOfPoint2f points1, MatOfPoint2f points2, int method, double ransacReprojThreshold) |

| static Mat | findFundamentalMat (MatOfPoint2f points1, MatOfPoint2f points2, int method, double ransacReprojThreshold, double confidence) |

| static Mat | findFundamentalMat (MatOfPoint2f points1, MatOfPoint2f points2, int method, double ransacReprojThreshold, double confidence, int maxIters) |

| Calculates a fundamental matrix from the corresponding points in two images. | |

| static Mat | findFundamentalMat (MatOfPoint2f points1, MatOfPoint2f points2, int method, double ransacReprojThreshold, double confidence, int maxIters, Mat mask) |

| Calculates a fundamental matrix from the corresponding points in two images. | |

| static Mat | findFundamentalMat (MatOfPoint2f points1, MatOfPoint2f points2, int method, double ransacReprojThreshold, double confidence, Mat mask) |

| static Mat | findFundamentalMat (MatOfPoint2f points1, MatOfPoint2f points2, Mat mask, UsacParams _params) |

| static Mat | findHomography (MatOfPoint2f srcPoints, MatOfPoint2f dstPoints) |

| Finds a perspective transformation between two planes. | |

| static Mat | findHomography (MatOfPoint2f srcPoints, MatOfPoint2f dstPoints, int method) |

| Finds a perspective transformation between two planes. | |

| static Mat | findHomography (MatOfPoint2f srcPoints, MatOfPoint2f dstPoints, int method, double ransacReprojThreshold) |

| Finds a perspective transformation between two planes. | |

| static Mat | findHomography (MatOfPoint2f srcPoints, MatOfPoint2f dstPoints, int method, double ransacReprojThreshold, Mat mask) |

| Finds a perspective transformation between two planes. | |

| static Mat | findHomography (MatOfPoint2f srcPoints, MatOfPoint2f dstPoints, int method, double ransacReprojThreshold, Mat mask, int maxIters) |

| Finds a perspective transformation between two planes. | |

| static Mat | findHomography (MatOfPoint2f srcPoints, MatOfPoint2f dstPoints, int method, double ransacReprojThreshold, Mat mask, int maxIters, double confidence) |

| Finds a perspective transformation between two planes. | |

| static Mat | findHomography (MatOfPoint2f srcPoints, MatOfPoint2f dstPoints, Mat mask, UsacParams _params) |

| static double | fisheye_calibrate (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d image_size, Mat K, Mat D, List< Mat > rvecs, List< Mat > tvecs) |

| Performs camera calibration. | |

| static double | fisheye_calibrate (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d image_size, Mat K, Mat D, List< Mat > rvecs, List< Mat > tvecs, int flags) |

| Performs camera calibration. | |

| static double | fisheye_calibrate (List< Mat > objectPoints, List< Mat > imagePoints, in Vec2d image_size, Mat K, Mat D, List< Mat > rvecs, List< Mat > tvecs, int flags, in Vec3d criteria) |

| Performs camera calibration. | |

| static double | fisheye_calibrate (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) image_size, Mat K, Mat D, List< Mat > rvecs, List< Mat > tvecs) |

| Performs camera calibration. | |

| static double | fisheye_calibrate (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) image_size, Mat K, Mat D, List< Mat > rvecs, List< Mat > tvecs, int flags) |

| Performs camera calibration. | |

| static double | fisheye_calibrate (List< Mat > objectPoints, List< Mat > imagePoints, in(double width, double height) image_size, Mat K, Mat D, List< Mat > rvecs, List< Mat > tvecs, int flags, in(double type, double maxCount, double epsilon) criteria) |

| Performs camera calibration. | |

| static double | fisheye_calibrate (List< Mat > objectPoints, List< Mat > imagePoints, Size image_size, Mat K, Mat D, List< Mat > rvecs, List< Mat > tvecs) |

| Performs camera calibration. | |

| static double | fisheye_calibrate (List< Mat > objectPoints, List< Mat > imagePoints, Size image_size, Mat K, Mat D, List< Mat > rvecs, List< Mat > tvecs, int flags) |

| Performs camera calibration. | |

| static double | fisheye_calibrate (List< Mat > objectPoints, List< Mat > imagePoints, Size image_size, Mat K, Mat D, List< Mat > rvecs, List< Mat > tvecs, int flags, TermCriteria criteria) |

| Performs camera calibration. | |

| static void | fisheye_distortPoints (Mat undistorted, Mat distorted, Mat K, Mat D) |

| Distorts 2D points using fisheye model. | |

| static void | fisheye_distortPoints (Mat undistorted, Mat distorted, Mat K, Mat D, double alpha) |

| Distorts 2D points using fisheye model. | |

| static void | fisheye_distortPoints (Mat undistorted, Mat distorted, Mat Kundistorted, Mat K, Mat D) |

| static void | fisheye_distortPoints (Mat undistorted, Mat distorted, Mat Kundistorted, Mat K, Mat D, double alpha) |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, in Vec2d image_size, Mat R, Mat P) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, in Vec2d image_size, Mat R, Mat P, double balance) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, in Vec2d image_size, Mat R, Mat P, double balance, in Vec2d new_size) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, in Vec2d image_size, Mat R, Mat P, double balance, in Vec2d new_size, double fov_scale) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, in(double width, double height) image_size, Mat R, Mat P) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, in(double width, double height) image_size, Mat R, Mat P, double balance) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, in(double width, double height) image_size, Mat R, Mat P, double balance, in(double width, double height) new_size) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, in(double width, double height) image_size, Mat R, Mat P, double balance, in(double width, double height) new_size, double fov_scale) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, Size image_size, Mat R, Mat P) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, Size image_size, Mat R, Mat P, double balance) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, Size image_size, Mat R, Mat P, double balance, Size new_size) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_estimateNewCameraMatrixForUndistortRectify (Mat K, Mat D, Size image_size, Mat R, Mat P, double balance, Size new_size, double fov_scale) |

| Estimates new camera intrinsic matrix for undistortion or rectification. | |

| static void | fisheye_initUndistortRectifyMap (Mat K, Mat D, Mat R, Mat P, in Vec2d size, int m1type, Mat map1, Mat map2) |

| Computes undistortion and rectification maps for image transform by remap. If D is empty, zero distortion is used; if R or P is empty, identity matrices are used. | |

| static void | fisheye_initUndistortRectifyMap (Mat K, Mat D, Mat R, Mat P, in(double width, double height) size, int m1type, Mat map1, Mat map2) |

| Computes undistortion and rectification maps for image transform by remap. If D is empty, zero distortion is used; if R or P is empty, identity matrices are used. | |

| static void | fisheye_initUndistortRectifyMap (Mat K, Mat D, Mat R, Mat P, Size size, int m1type, Mat map1, Mat map2) |

| Computes undistortion and rectification maps for image transform by remap. If D is empty, zero distortion is used; if R or P is empty, identity matrices are used. | |

| static void | fisheye_projectPoints (Mat objectPoints, Mat imagePoints, Mat rvec, Mat tvec, Mat K, Mat D) |

| static void | fisheye_projectPoints (Mat objectPoints, Mat imagePoints, Mat rvec, Mat tvec, Mat K, Mat D, double alpha) |

| static void | fisheye_projectPoints (Mat objectPoints, Mat imagePoints, Mat rvec, Mat tvec, Mat K, Mat D, double alpha, Mat jacobian) |

| static bool | fisheye_solvePnP (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec) |

| Finds an object pose from 3D-2D point correspondences for fisheye camera model. | |

| static bool | fisheye_solvePnP (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess) |

| Finds an object pose from 3D-2D point correspondences for fisheye camera model. | |

| static bool | fisheye_solvePnP (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int flags) |

| Finds an object pose from 3D-2D point correspondences for fisheye camera model. | |

| static bool | fisheye_solvePnP (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int flags, in Vec3d criteria) |

| Finds an object pose from 3D-2D point correspondences for fisheye camera model. | |

| static bool | fisheye_solvePnP (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int flags, in(double type, double maxCount, double epsilon) criteria) |

| Finds an object pose from 3D-2D point correspondences for fisheye camera model. | |

| static bool | fisheye_solvePnP (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int flags, TermCriteria criteria) |

| Finds an object pose from 3D-2D point correspondences for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int iterationsCount) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int iterationsCount, float reprojectionError) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int iterationsCount, float reprojectionError, double confidence) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int iterationsCount, float reprojectionError, double confidence, Mat inliers) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int iterationsCount, float reprojectionError, double confidence, Mat inliers, int flags) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int iterationsCount, float reprojectionError, double confidence, Mat inliers, int flags, in Vec3d criteria) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int iterationsCount, float reprojectionError, double confidence, Mat inliers, int flags, in(double type, double maxCount, double epsilon) criteria) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static bool | fisheye_solvePnPRansac (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int iterationsCount, float reprojectionError, double confidence, Mat inliers, int flags, TermCriteria criteria) |

| Finds an object pose from 3D-2D point correspondences using the RANSAC scheme for fisheye camera model. | |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat T) |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat T, int flags) |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat T, int flags, in Vec3d criteria) |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat T, List< Mat > rvecs, List< Mat > tvecs) |

| Performs stereo calibration. | |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat T, List< Mat > rvecs, List< Mat > tvecs, int flags) |

| Performs stereo calibration. | |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat T, List< Mat > rvecs, List< Mat > tvecs, int flags, in Vec3d criteria) |

| Performs stereo calibration. | |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat T) |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat T, int flags) |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat T, int flags, in(double type, double maxCount, double epsilon) criteria) |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat T, List< Mat > rvecs, List< Mat > tvecs) |

| Performs stereo calibration. | |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat T, List< Mat > rvecs, List< Mat > tvecs, int flags) |

| Performs stereo calibration. | |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat T, List< Mat > rvecs, List< Mat > tvecs, int flags, in(double type, double maxCount, double epsilon) criteria) |

| Performs stereo calibration. | |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat T) |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat T, int flags) |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat T, int flags, TermCriteria criteria) |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat T, List< Mat > rvecs, List< Mat > tvecs) |

| Performs stereo calibration. | |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat T, List< Mat > rvecs, List< Mat > tvecs, int flags) |

| Performs stereo calibration. | |

| static double | fisheye_stereoCalibrate (List< Mat > objectPoints, List< Mat > imagePoints1, List< Mat > imagePoints2, Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat T, List< Mat > rvecs, List< Mat > tvecs, int flags, TermCriteria criteria) |

| Performs stereo calibration. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags, in Vec2d newImageSize) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags, in Vec2d newImageSize, double balance) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, in Vec2d imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags, in Vec2d newImageSize, double balance, double fov_scale) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags, in(double width, double height) newImageSize) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags, in(double width, double height) newImageSize, double balance) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, in(double width, double height) imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags, in(double width, double height) newImageSize, double balance, double fov_scale) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags, Size newImageSize) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags, Size newImageSize, double balance) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_stereoRectify (Mat K1, Mat D1, Mat K2, Mat D2, Size imageSize, Mat R, Mat tvec, Mat R1, Mat R2, Mat P1, Mat P2, Mat Q, int flags, Size newImageSize, double balance, double fov_scale) |

| Stereo rectification for fisheye camera model. | |

| static void | fisheye_undistortImage (Mat distorted, Mat undistorted, Mat K, Mat D) |

| Transforms an image to compensate for fisheye lens distortion. | |

| static void | fisheye_undistortImage (Mat distorted, Mat undistorted, Mat K, Mat D, Mat Knew) |

| Transforms an image to compensate for fisheye lens distortion. | |

| static void | fisheye_undistortImage (Mat distorted, Mat undistorted, Mat K, Mat D, Mat Knew, in Vec2d new_size) |

| Transforms an image to compensate for fisheye lens distortion. | |

| static void | fisheye_undistortImage (Mat distorted, Mat undistorted, Mat K, Mat D, Mat Knew, in(double width, double height) new_size) |

| Transforms an image to compensate for fisheye lens distortion. | |

| static void | fisheye_undistortImage (Mat distorted, Mat undistorted, Mat K, Mat D, Mat Knew, Size new_size) |

| Transforms an image to compensate for fisheye lens distortion. | |

| static void | fisheye_undistortPoints (Mat distorted, Mat undistorted, Mat K, Mat D) |

| Undistorts 2D points using fisheye model. | |

| static void | fisheye_undistortPoints (Mat distorted, Mat undistorted, Mat K, Mat D, Mat R) |

| Undistorts 2D points using fisheye model. | |

| static void | fisheye_undistortPoints (Mat distorted, Mat undistorted, Mat K, Mat D, Mat R, Mat P) |

| Undistorts 2D points using fisheye model. | |

| static void | fisheye_undistortPoints (Mat distorted, Mat undistorted, Mat K, Mat D, Mat R, Mat P, in Vec3d criteria) |

| Undistorts 2D points using fisheye model. | |

| static void | fisheye_undistortPoints (Mat distorted, Mat undistorted, Mat K, Mat D, Mat R, Mat P, in(double type, double maxCount, double epsilon) criteria) |

| Undistorts 2D points using fisheye model. | |

| static void | fisheye_undistortPoints (Mat distorted, Mat undistorted, Mat K, Mat D, Mat R, Mat P, TermCriteria criteria) |

| Undistorts 2D points using fisheye model. | |

| static Mat | getDefaultNewCameraMatrix (Mat cameraMatrix) |

| Returns the default new camera matrix. | |

| static Mat | getDefaultNewCameraMatrix (Mat cameraMatrix, in Vec2d imgsize) |

| Returns the default new camera matrix. | |

| static Mat | getDefaultNewCameraMatrix (Mat cameraMatrix, in Vec2d imgsize, bool centerPrincipalPoint) |

| Returns the default new camera matrix. | |

| static Mat | getDefaultNewCameraMatrix (Mat cameraMatrix, in(double width, double height) imgsize) |

| Returns the default new camera matrix. | |

| static Mat | getDefaultNewCameraMatrix (Mat cameraMatrix, in(double width, double height) imgsize, bool centerPrincipalPoint) |

| Returns the default new camera matrix. | |

| static Mat | getDefaultNewCameraMatrix (Mat cameraMatrix, Size imgsize) |

| Returns the default new camera matrix. | |

| static Mat | getDefaultNewCameraMatrix (Mat cameraMatrix, Size imgsize, bool centerPrincipalPoint) |

| Returns the default new camera matrix. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, in Vec2d imageSize, double alpha) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, in Vec2d imageSize, double alpha, in Vec2d newImgSize) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, in Vec2d imageSize, double alpha, in Vec2d newImgSize, out Vec4i validPixROI) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, in Vec2d imageSize, double alpha, in Vec2d newImgSize, out Vec4i validPixROI, bool centerPrincipalPoint) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, in(double width, double height) imageSize, double alpha) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, in(double width, double height) imageSize, double alpha, in(double width, double height) newImgSize) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, in(double width, double height) imageSize, double alpha, in(double width, double height) newImgSize, out(int x, int y, int width, int height) validPixROI) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, in(double width, double height) imageSize, double alpha, in(double width, double height) newImgSize, out(int x, int y, int width, int height) validPixROI, bool centerPrincipalPoint) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, Size imageSize, double alpha) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, Size imageSize, double alpha, Size newImgSize) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, Size imageSize, double alpha, Size newImgSize, Rect validPixROI) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Mat | getOptimalNewCameraMatrix (Mat cameraMatrix, Mat distCoeffs, Size imageSize, double alpha, Size newImgSize, Rect validPixROI, bool centerPrincipalPoint) |

| Returns the new camera intrinsic matrix based on the free scaling parameter. | |

| static Rect | getValidDisparityROI (Rect roi1, Rect roi2, int minDisparity, int numberOfDisparities, int blockSize) |

| static (int x, int y, int width, int height) | getValidDisparityROIAsValueTuple (in(int x, int y, int width, int height) roi1, (int x, int y, int width, int height) roi2, int minDisparity, int numberOfDisparities, int blockSize) |

| static Vec4i | getValidDisparityROIAsVec4i (in Vec4i roi1, Vec4i roi2, int minDisparity, int numberOfDisparities, int blockSize) |

| static Mat | initCameraMatrix2D (List< MatOfPoint3f > objectPoints, List< MatOfPoint2f > imagePoints, in Vec2d imageSize) |

| Finds an initial camera intrinsic matrix from 3D-2D point correspondences. | |

| static Mat | initCameraMatrix2D (List< MatOfPoint3f > objectPoints, List< MatOfPoint2f > imagePoints, in Vec2d imageSize, double aspectRatio) |

| Finds an initial camera intrinsic matrix from 3D-2D point correspondences. | |

| static Mat | initCameraMatrix2D (List< MatOfPoint3f > objectPoints, List< MatOfPoint2f > imagePoints, in(double width, double height) imageSize) |

| Finds an initial camera intrinsic matrix from 3D-2D point correspondences. | |

| static Mat | initCameraMatrix2D (List< MatOfPoint3f > objectPoints, List< MatOfPoint2f > imagePoints, in(double width, double height) imageSize, double aspectRatio) |

| Finds an initial camera intrinsic matrix from 3D-2D point correspondences. | |

| static Mat | initCameraMatrix2D (List< MatOfPoint3f > objectPoints, List< MatOfPoint2f > imagePoints, Size imageSize) |

| Finds an initial camera intrinsic matrix from 3D-2D point correspondences. | |

| static Mat | initCameraMatrix2D (List< MatOfPoint3f > objectPoints, List< MatOfPoint2f > imagePoints, Size imageSize, double aspectRatio) |

| Finds an initial camera intrinsic matrix from 3D-2D point correspondences. | |

| static void | initInverseRectificationMap (Mat cameraMatrix, Mat distCoeffs, Mat R, Mat newCameraMatrix, in Vec2d size, int m1type, Mat map1, Mat map2) |

| Computes the projection and inverse-rectification transformation map. In essence, this is the inverse of initUndistortRectifyMap to accommodate stereo-rectification of projectors ('inverse-cameras') in projector-camera pairs. | |

| static void | initInverseRectificationMap (Mat cameraMatrix, Mat distCoeffs, Mat R, Mat newCameraMatrix, in(double width, double height) size, int m1type, Mat map1, Mat map2) |

| Computes the projection and inverse-rectification transformation map. In essence, this is the inverse of initUndistortRectifyMap to accommodate stereo-rectification of projectors ('inverse-cameras') in projector-camera pairs. | |

| static void | initInverseRectificationMap (Mat cameraMatrix, Mat distCoeffs, Mat R, Mat newCameraMatrix, Size size, int m1type, Mat map1, Mat map2) |

| Computes the projection and inverse-rectification transformation map. In essence, this is the inverse of initUndistortRectifyMap to accommodate stereo-rectification of projectors ('inverse-cameras') in projector-camera pairs. | |

| static void | initUndistortRectifyMap (Mat cameraMatrix, Mat distCoeffs, Mat R, Mat newCameraMatrix, in Vec2d size, int m1type, Mat map1, Mat map2) |

| Computes the undistortion and rectification transformation map. | |

| static void | initUndistortRectifyMap (Mat cameraMatrix, Mat distCoeffs, Mat R, Mat newCameraMatrix, in(double width, double height) size, int m1type, Mat map1, Mat map2) |

| Computes the undistortion and rectification transformation map. | |

| static void | initUndistortRectifyMap (Mat cameraMatrix, Mat distCoeffs, Mat R, Mat newCameraMatrix, Size size, int m1type, Mat map1, Mat map2) |

| Computes the undistortion and rectification transformation map. | |

| static void | matMulDeriv (Mat A, Mat B, Mat dABdA, Mat dABdB) |

| Computes partial derivatives of the matrix product for each multiplied matrix. | |

| static void | projectPoints (MatOfPoint3f objectPoints, Mat rvec, Mat tvec, Mat cameraMatrix, MatOfDouble distCoeffs, MatOfPoint2f imagePoints) |

| Projects 3D points to an image plane. | |

| static void | projectPoints (MatOfPoint3f objectPoints, Mat rvec, Mat tvec, Mat cameraMatrix, MatOfDouble distCoeffs, MatOfPoint2f imagePoints, Mat jacobian) |

| Projects 3D points to an image plane. | |

| static void | projectPoints (MatOfPoint3f objectPoints, Mat rvec, Mat tvec, Mat cameraMatrix, MatOfDouble distCoeffs, MatOfPoint2f imagePoints, Mat jacobian, double aspectRatio) |

| Projects 3D points to an image plane. | |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat cameraMatrix, Mat R, Mat t) |

| Recovers the relative camera rotation and the translation from an estimated essential matrix and the corresponding points in two images, using cheirality check. Returns the number of inliers that pass the check. | |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat cameraMatrix, Mat R, Mat t, double distanceThresh) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat cameraMatrix, Mat R, Mat t, double distanceThresh, Mat mask) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat cameraMatrix, Mat R, Mat t, double distanceThresh, Mat mask, Mat triangulatedPoints) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat cameraMatrix, Mat R, Mat t, Mat mask) |

| Recovers the relative camera rotation and the translation from an estimated essential matrix and the corresponding points in two images, using cheirality check. Returns the number of inliers that pass the check. | |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat R, Mat t) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat R, Mat t, double focal) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat R, Mat t, double focal, in Vec2d pp) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat R, Mat t, double focal, in Vec2d pp, Mat mask) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat R, Mat t, double focal, in(double x, double y) pp) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat R, Mat t, double focal, in(double x, double y) pp, Mat mask) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat R, Mat t, double focal, Point pp) |

| static int | recoverPose (Mat E, Mat points1, Mat points2, Mat R, Mat t, double focal, Point pp, Mat mask) |

| static int | recoverPose (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, Mat E, Mat R, Mat t) |

| Recovers the relative camera rotation and the translation from corresponding points in two images from two different cameras, using cheirality check. Returns the number of inliers that pass the check. | |

| static int | recoverPose (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, Mat E, Mat R, Mat t, int method) |

| Recovers the relative camera rotation and the translation from corresponding points in two images from two different cameras, using cheirality check. Returns the number of inliers that pass the check. | |

| static int | recoverPose (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, Mat E, Mat R, Mat t, int method, double prob) |

| Recovers the relative camera rotation and the translation from corresponding points in two images from two different cameras, using cheirality check. Returns the number of inliers that pass the check. | |

| static int | recoverPose (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, Mat E, Mat R, Mat t, int method, double prob, double threshold) |

| Recovers the relative camera rotation and the translation from corresponding points in two images from two different cameras, using cheirality check. Returns the number of inliers that pass the check. | |

| static int | recoverPose (Mat points1, Mat points2, Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, Mat E, Mat R, Mat t, int method, double prob, double threshold, Mat mask) |

| Recovers the relative camera rotation and the translation from corresponding points in two images from two different cameras, using cheirality check. Returns the number of inliers that pass the check. | |

| static float | rectify3Collinear (Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, Mat cameraMatrix3, Mat distCoeffs3, List< Mat > imgpt1, List< Mat > imgpt3, in Vec2d imageSize, Mat R12, Mat T12, Mat R13, Mat T13, Mat R1, Mat R2, Mat R3, Mat P1, Mat P2, Mat P3, Mat Q, double alpha, in Vec2d newImgSize, out Vec4i roi1, out Vec4i roi2, int flags) |

| static float | rectify3Collinear (Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, Mat cameraMatrix3, Mat distCoeffs3, List< Mat > imgpt1, List< Mat > imgpt3, in(double width, double height) imageSize, Mat R12, Mat T12, Mat R13, Mat T13, Mat R1, Mat R2, Mat R3, Mat P1, Mat P2, Mat P3, Mat Q, double alpha, in(double width, double height) newImgSize, out(int x, int y, int width, int height) roi1, out(int x, int y, int width, int height) roi2, int flags) |

| static float | rectify3Collinear (Mat cameraMatrix1, Mat distCoeffs1, Mat cameraMatrix2, Mat distCoeffs2, Mat cameraMatrix3, Mat distCoeffs3, List< Mat > imgpt1, List< Mat > imgpt3, Size imageSize, Mat R12, Mat T12, Mat R13, Mat T13, Mat R1, Mat R2, Mat R3, Mat P1, Mat P2, Mat P3, Mat Q, double alpha, Size newImgSize, Rect roi1, Rect roi2, int flags) |

| static void | reprojectImageTo3D (Mat disparity, Mat _3dImage, Mat Q) |

| Reprojects a disparity image to 3D space. | |

| static void | reprojectImageTo3D (Mat disparity, Mat _3dImage, Mat Q, bool handleMissingValues) |

| Reprojects a disparity image to 3D space. | |

| static void | reprojectImageTo3D (Mat disparity, Mat _3dImage, Mat Q, bool handleMissingValues, int ddepth) |

| Reprojects a disparity image to 3D space. | |

| static void | Rodrigues (Mat src, Mat dst) |

| Converts a rotation matrix to a rotation vector or vice versa. | |

| static void | Rodrigues (Mat src, Mat dst, Mat jacobian) |

| Converts a rotation matrix to a rotation vector or vice versa. | |

| static double[] | RQDecomp3x3 (Mat src, Mat mtxR, Mat mtxQ) |

| Computes an RQ decomposition of 3x3 matrices. | |

| static double[] | RQDecomp3x3 (Mat src, Mat mtxR, Mat mtxQ, Mat Qx) |

| Computes an RQ decomposition of 3x3 matrices. | |

| static double[] | RQDecomp3x3 (Mat src, Mat mtxR, Mat mtxQ, Mat Qx, Mat Qy) |

| Computes an RQ decomposition of 3x3 matrices. | |

| static double[] | RQDecomp3x3 (Mat src, Mat mtxR, Mat mtxQ, Mat Qx, Mat Qy, Mat Qz) |

| Computes an RQ decomposition of 3x3 matrices. | |

| static double | sampsonDistance (Mat pt1, Mat pt2, Mat F) |

| Calculates the Sampson Distance between two points. | |

| static int | solveP3P (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, int flags) |

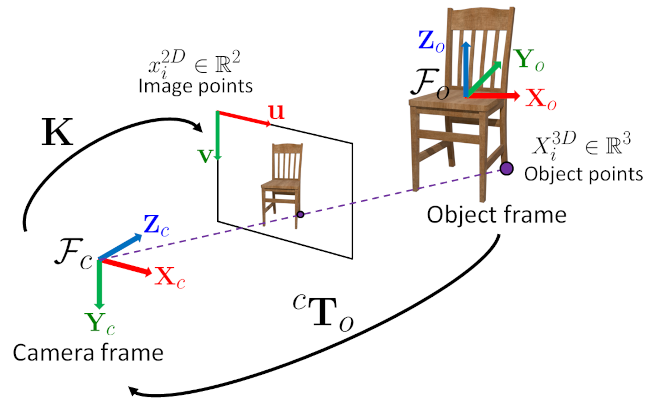

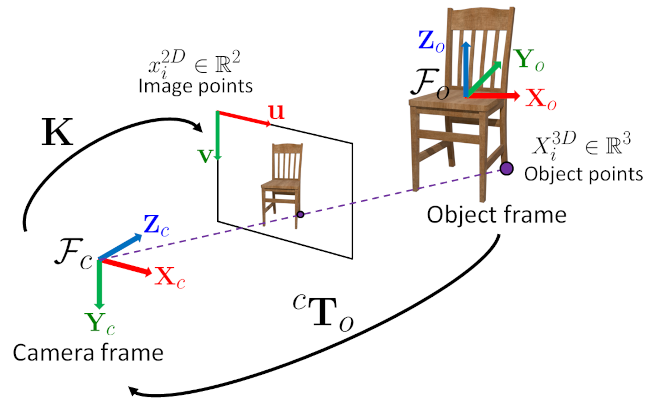

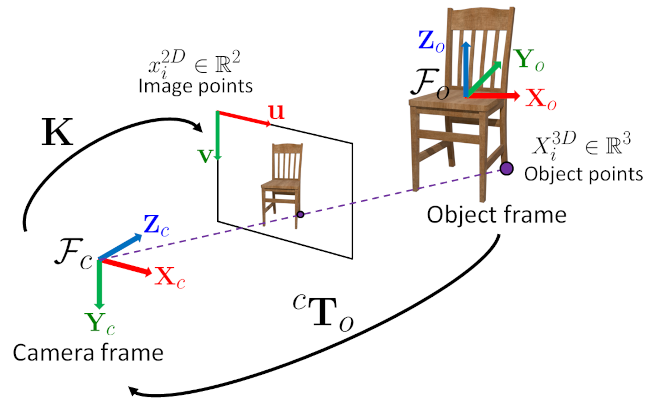

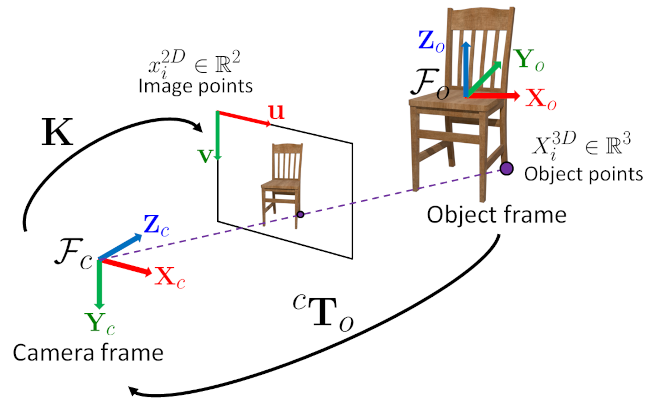

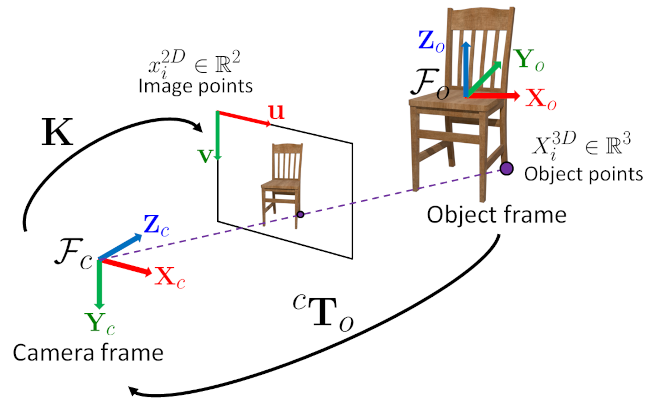

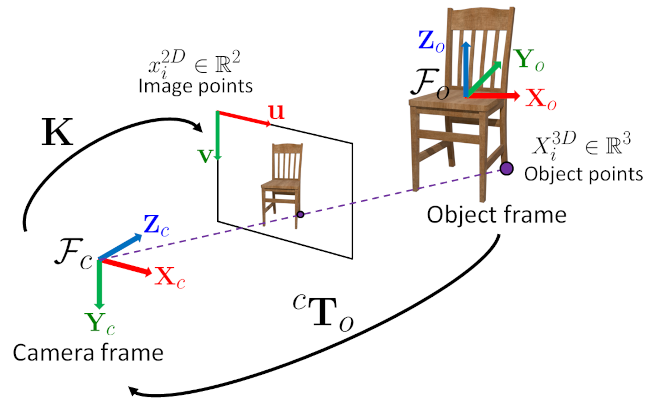

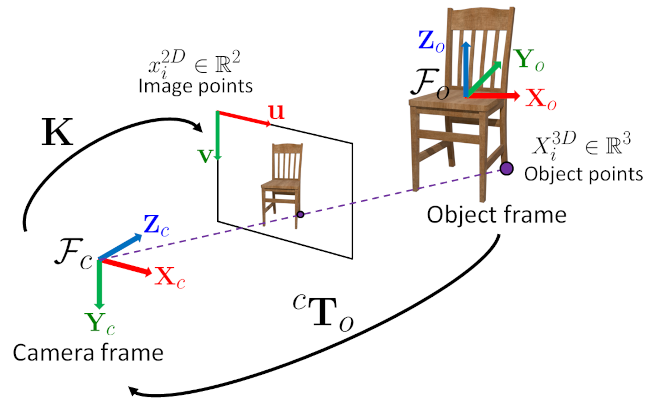

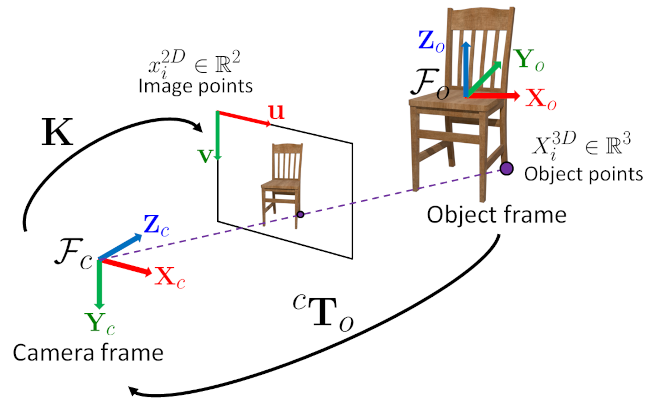

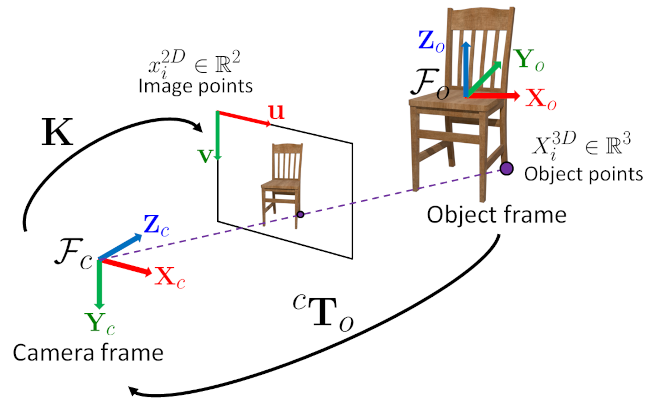

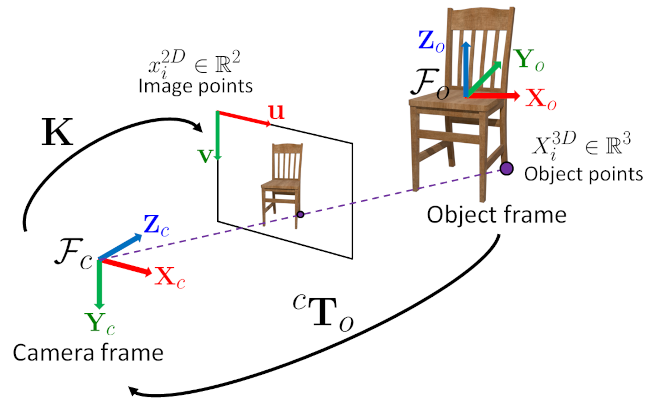

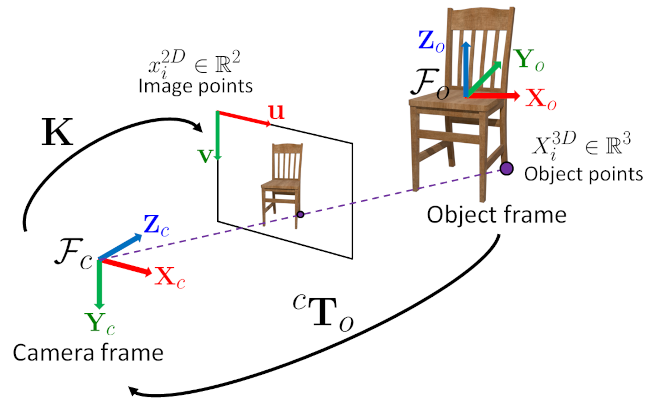

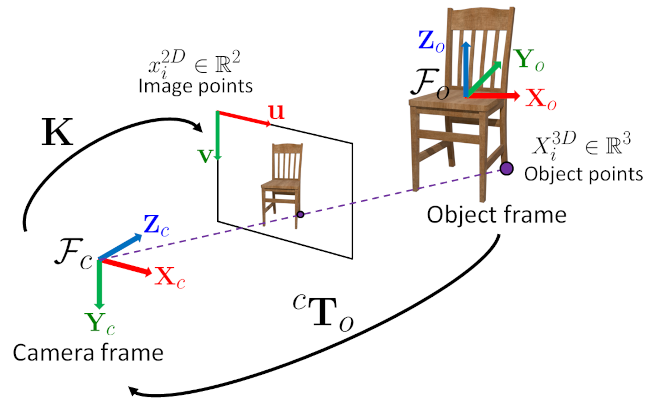

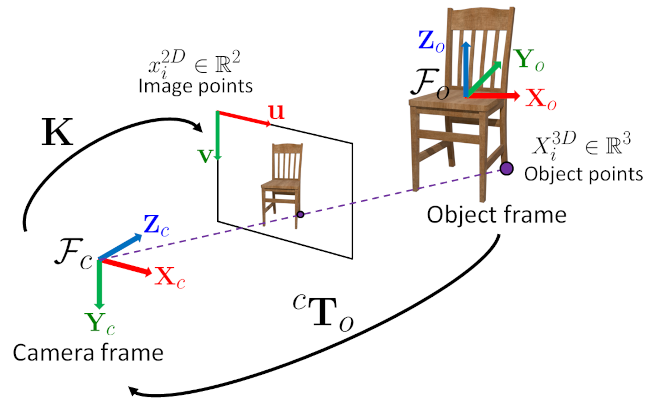

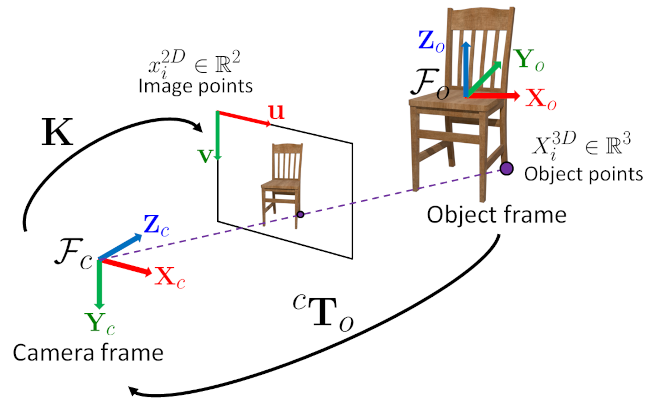

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from three 3D-2D point correspondences. | |

| static bool | solvePnP (MatOfPoint3f objectPoints, MatOfPoint2f imagePoints, Mat cameraMatrix, MatOfDouble distCoeffs, Mat rvec, Mat tvec) |

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from 3D-2D point correspondences. | |

| static bool | solvePnP (MatOfPoint3f objectPoints, MatOfPoint2f imagePoints, Mat cameraMatrix, MatOfDouble distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess) |

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from 3D-2D point correspondences. | |

| static bool | solvePnP (MatOfPoint3f objectPoints, MatOfPoint2f imagePoints, Mat cameraMatrix, MatOfDouble distCoeffs, Mat rvec, Mat tvec, bool useExtrinsicGuess, int flags) |

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from 3D-2D point correspondences. | |

| static int | solvePnPGeneric (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs) |

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from 3D-2D point correspondences. | |

| static int | solvePnPGeneric (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, bool useExtrinsicGuess) |

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from 3D-2D point correspondences. | |

| static int | solvePnPGeneric (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, bool useExtrinsicGuess, int flags) |

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from 3D-2D point correspondences. | |

| static int | solvePnPGeneric (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, bool useExtrinsicGuess, int flags, Mat rvec) |

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from 3D-2D point correspondences. | |

| static int | solvePnPGeneric (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, bool useExtrinsicGuess, int flags, Mat rvec, Mat tvec) |

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from 3D-2D point correspondences. | |

| static int | solvePnPGeneric (Mat objectPoints, Mat imagePoints, Mat cameraMatrix, Mat distCoeffs, List< Mat > rvecs, List< Mat > tvecs, bool useExtrinsicGuess, int flags, Mat rvec, Mat tvec, Mat reprojectionError) |

| Finds an object pose \( {}^{c}\mathbf{T}_o \) from 3D-2D point correspondences. | |

| static bool | solvePnPRansac (MatOfPoint3f objectPoints, MatOfPoint2f imagePoints, Mat cameraMatrix, MatOfDouble distCoeffs, Mat rvec, Mat tvec) |